Totally wired for the future

An out-of-your-mind infrastructure for the next generation of scholars, scientists and engineers.

What kind of network infrastructure do you need to support the study of aquifer systems and subterranean fluid flow? How about weather and oceanographic modeling? Spatial astrophysics models of hypothetical supernova collapse? The big bang theory?

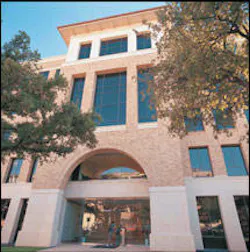

The Applied Computational Engineering and Sciences (ACES) building at the University of Texas at Austin is on the leading edge of academic computational facilities, with state-of-the-art equipment and systems. Donated by the O'Donnell Foundation of Dallas, the facility provides an unparalleled environment for interdisciplinary research and graduate study in computational science and engineering, mathematical modeling, applied mathematics, software engineering and computer visualization.

To train the next generation of scholars, scientists and engineers, the developers of the ACES building had to plan for the future. The network infrastructure would certainly accommodate today's high-bandwidth data applications, but the building's benefactor also set at least three goals to ensure that the building would be wired for the future: capacity for growth; the ability to upgrade quickly and economically; and the flexibility to handle unforeseen changes and developments in future technology.

"That means that the building's network infrastructure had to be wired to the gills," says Eric Hepburn, coordinator of network services for the ACES building.

The backbone

The voice and data network provides the infrastructure for all voice and data communications within the building. There are more than 1.6 million feet (300 miles) of cable in the ACES building, including:

- 320,000 feet (60 miles) of Avaya LazrSpeed multimode and OptiSpeed singlemode optical-fiber cable, capable of supporting 10 Gbits/sec to every desktop (1,100 in all);

- 870,000 feet (165 miles) of Avaya Systimax SCS GigaSpeed cable distributed to more than 3,000 data ports;

- 425,000 feet (80 miles) of Avaya Systimax SCS GigaSpeed cable for more than 1,500 voice connections.
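As a quick arithmetic check on the footage listed above, the Python sketch below sums the three cable runs and converts feet to statute miles (5,280 feet per mile). The dictionary labels are illustrative names for the three runs, not Avaya product identifiers.

```python
FEET_PER_MILE = 5280

# Cable footage as listed in the article for the ACES building.
# The keys are illustrative labels for each run.
cable_runs_ft = {
    "LazrSpeed/OptiSpeed optical fiber": 320_000,
    "GigaSpeed copper (data)": 870_000,
    "GigaSpeed copper (voice)": 425_000,
}


def feet_to_miles(feet: float) -> float:
    """Convert a length in feet to statute miles."""
    return feet / FEET_PER_MILE


total_ft = sum(cable_runs_ft.values())
for name, feet in cable_runs_ft.items():
    print(f"{name}: {feet:,} ft = {feet_to_miles(feet):.1f} mi")
print(f"Total: {total_ft:,} ft = {feet_to_miles(total_ft):.1f} mi")
```

The runs add up to 1,615,000 feet, roughly 306 miles, which is consistent with the rounded "1.6 million feet (300 miles)" figure given for the building.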

All optical-fiber and copper cables originate in the building's main distribution facility (MDF) and run to locations throughout the building; a distribution trunk connects the MDF to the university's central telecommunications facility, UT Telecom. A wireless network provides mobile network access to laptop users in and around the building.

Most offices have six copper data cables, three copper voice cables, one pair of multimode fiber and one pair of singlemode fiber. Larger offices typically contain eight copper data cables, four copper voice cables, two pairs of multimode fiber and two pairs of singlemode fiber.
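The two office configurations above can be captured as a small data structure. This is a minimal sketch: the class and constant names (`OfficeDrop`, `STANDARD_OFFICE`, `LARGE_OFFICE`) are hypothetical, and the only assumption beyond the article is that each fiber "pair" means two strands, one for each direction.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OfficeDrop:
    """Cables terminated in one office (hypothetical model of the ACES layout)."""
    copper_data: int
    copper_voice: int
    multimode_pairs: int
    singlemode_pairs: int

    def total_copper(self) -> int:
        # Data and voice drops are both copper cables.
        return self.copper_data + self.copper_voice

    def fiber_strands(self) -> int:
        # Each fiber pair is two strands (transmit and receive).
        return 2 * (self.multimode_pairs + self.singlemode_pairs)


STANDARD_OFFICE = OfficeDrop(copper_data=6, copper_voice=3,
                             multimode_pairs=1, singlemode_pairs=1)
LARGE_OFFICE = OfficeDrop(copper_data=8, copper_voice=4,
                          multimode_pairs=2, singlemode_pairs=2)

for label, office in [("standard", STANDARD_OFFICE), ("large", LARGE_OFFICE)]:
    print(f"{label}: {office.total_copper()} copper cables, "
          f"{office.fiber_strands()} fiber strands")
```

A typical office therefore receives nine copper cables and four fiber strands, while a larger office receives twelve copper cables and eight fiber strands.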

The amount of network cable in laboratory spaces depends on the linear feet of raceway around the perimeter of each lab. Typical distribution is two copper data cables and one copper voice cable per four feet of raceway, a configuration that allows very high connection densities in the laboratory.
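The laboratory rule of thumb turns into a one-line calculation. In this sketch, `lab_cable_count` is a hypothetical helper, and rounding a partial four-foot segment up to a full segment is an assumption, because the article does not say how remainders are handled.

```python
import math


def lab_cable_count(raceway_ft: float) -> dict[str, int]:
    """Estimate lab cabling from perimeter raceway length.

    Applies the typical ratio of two copper data cables and one copper
    voice cable per four feet of raceway. Partial four-foot segments are
    rounded up (an assumption, not stated in the article).
    """
    if raceway_ft < 0:
        raise ValueError("raceway length must be non-negative")
    segments = math.ceil(raceway_ft / 4)
    return {"data": 2 * segments, "voice": segments}


# A hypothetical lab with 120 feet of perimeter raceway.
print(lab_cable_count(120))
```

For a hypothetical lab with 120 feet of raceway, this yields 60 data cables and 30 voice cables, which shows how quickly connection density grows with lab perimeter.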

Specialized features of the ACES building are also enabled by the building's extensive network infrastructure capability. The Visualization Research Laboratory, a 2,900-square-foot, high-performance, interactive facility, uses a 10-foot, 180° cylindrical projection screen with images generated by an SGI Onyx2 supercomputer. Students can use this lab to analyze large graphic data files in 3-D projection.

A 42-seat electronic seminar room, with remote distance learning and advanced video and teleconferencing capabilities, has power and an Ethernet port at every seat. The 196-seat Avaya Auditorium also features power and Ethernet network connections at every seat, as well as an audiovisual presentation system and distance learning capability with a Dolby digital sound system.

Ahead of the curve

All this connectivity creates an enviable situation for network users. With so much capacity available, only about 20% of the 100-Mbit network is currently being used.
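To put the 20% figure in perspective, the sketch below treats it as an average load on a 100-Mbit link (an assumption, since the article does not say whether this is average or peak use) and compares that load with the 10-Gig capability cited for the fiber plant.

```python
LINK_MBPS = 100        # current desktop link speed (from the article)
UTILIZATION = 0.20     # "about 20%" in use, assumed here to be an average
TEN_GIG_MBPS = 10_000  # 10-Gig capability cited for the fiber plant

in_use_mbps = LINK_MBPS * UTILIZATION
headroom_mbps = LINK_MBPS - in_use_mbps
ratio = TEN_GIG_MBPS / in_use_mbps

print(f"In use: {in_use_mbps:.0f} Mbit/s; "
      f"unused on a 100-Mbit link: {headroom_mbps:.0f} Mbit/s")
print(f"10-Gig capacity is about {ratio:.0f}x the current load")
```

Under that assumption, roughly 20 Mbit/s is in use, and a 10-Gig link would offer about 500 times that load.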

According to Hepburn, the bottleneck is in the PC. Next-generation architectures with 64-bit processors and faster bus speeds are needed before PCs can make full use of even their 100-Mbit links.

"We're ready for 10-Gig to the desktop once the demand is there," says Hepburn. "We're waiting for the revolution in architecture that will make it necessary to purchase the electronics that can begin to fill our network capacity."

Hepburn notes that the projected capacity of the singlemode fiber installation will easily exceed the combined capacity of the multimode optical-fiber and copper cable infrastructure.

In the meantime, 2-k runs of singlemode fiber to the desktop use "odd" patches that bypass network gear, reducing transmission delays for special needs. For example, to provide maximum bandwidth as soon as possible, singlemode fiber is patched from high-speed clusters in research labs (there are more than 12 throughout the building) to the university's backbone or to a cluster in another building.

In the future, Hepburn sees at least two ways to use the enormous capacity of the network's singlemode fiber: direct patching of connections more than 300 meters apart, and direct patching to the campus network operations center where multimode fiber would not work.
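The distance-based choice between multimode and singlemode fiber for direct patches can be expressed as a simple rule. This sketch takes the 300-meter threshold from the article and treats it as an illustrative cutoff rather than a universal multimode limit, since actual reach depends on the fiber grade and the link speed. The `Medium` enum and the `choose_fiber` function are hypothetical names.

```python
from enum import Enum


class Medium(Enum):
    MULTIMODE = "multimode fiber"
    SINGLEMODE = "singlemode fiber"


# Threshold taken from the article's 300-meter figure; an illustrative
# assumption, since real multimode reach varies with fiber grade and speed.
MULTIMODE_MAX_M = 300


def choose_fiber(distance_m: float) -> Medium:
    """Pick a fiber type for a direct patch of the given length."""
    if distance_m < 0:
        raise ValueError("distance must be non-negative")
    if distance_m <= MULTIMODE_MAX_M:
        return Medium.MULTIMODE
    return Medium.SINGLEMODE


for d in (90, 300, 2000):
    print(f"{d} m -> {choose_fiber(d).value}")
```

Patches of up to 300 meters stay on multimode fiber, and anything longer, such as a run to the campus network operations center, falls to singlemode.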

The bottom line

What's the point of having so much unused capacity? The answer is simple: tomorrow's capacity at today's prices.

The ACES building was designed to have 100 percent excess capacity. Sixteen rows and six vertical chassis, including the horizontal trays and raceways that feed offices, are currently only half in use and can accommodate incremental upgrades or a completely new cabling network without disruption to users.

"We're not only ready for 10-Gig to the desktop, but we're ready for 100-Gig or possibly beyond," Hepburn says confidently. "This means it will be much longer before we have to buy new networking hardware."

Hepburn points out that infrastructure media are ahead of the curve right now, so it's a good time to invest. Even though the electronics to support it are only now becoming available, 10-Gig capability to the desktop is here. Because optical fiber has no near-end crosstalk, it's a good bet that it will become the preferred transmission medium of the future.

The ACES building is a model for the networking futurist.

"We've probably bought a 7- to 10-year window for ourselves before the front-end hardware completely catches up," Hepburn says.

Kevin Mistele is optical systems strategy manager at Avaya Connectivity Solutions (www.avaya.com).