The need for speed drives high-density connectivity

The right connectivity for 10-Gbit/sec transmission can put users in good position for 40- and 100-Gbit/sec transmission later.

Doug Coleman, Corning Cable Systems

Optical connectivity with OM3 and OM4 laser-optimized 50/125-micron multimode fiber has emerged as the media of choice in the data center. 10-Gbit/sec Ethernet (10GBase-SR) is becoming the primary data rate in data centers in response to server virtualization, converged networks and the need to relieve server input/output (I/O) bottlenecks. Data centers are deploying OM3/OM4 connectivity solutions to meet today's 10-Gbit/sec two-fiber serial transmission needs and to provide for future migration to 40/100-Gbit/sec parallel optics. High-port-count 10/40/100-Gbit/sec electronics call for high-density optical connectivity that eases cable management, makes efficient use of pathways and spaces, and supports green initiatives.

The need for speed

Server virtualization and converged networks drive the need for higher network data rates. Server virtualization increases utilization rates by consolidating multiple applications onto one server, which also reduces the number of servers required. Servers can support more applications thanks to technology enhancements in virtualization software and multicore processors.

Legacy servers typically run one application each at 15 to 20 percent utilization, whereas virtualized servers can currently support 20 to 25 applications, raising utilization to 80 to 90 percent. Virtualized servers are expected to support 100 applications in the near future. Running 25 applications on one physical server offers material and energy-cost savings because it can potentially eliminate 24 single-application servers.
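The consolidation arithmetic can be sketched in a few lines. This is a simplified model under stated assumptions: each legacy server runs exactly one application, every virtualized host is loaded to the same application count, and no headroom is reserved for failover or growth. The function name `consolidate` is hypothetical.

```python
import math

def consolidate(legacy_servers: int, apps_per_virtual_server: int) -> dict:
    """Estimate physical hosts needed when single-application servers are virtualized.

    Assumes one application per legacy server and uniform loading of each host.
    """
    hosts = math.ceil(legacy_servers / apps_per_virtual_server)
    return {"hosts": hosts, "eliminated": legacy_servers - hosts}

print(consolidate(25, 25))    # {'hosts': 1, 'eliminated': 24}
print(consolidate(1000, 20))  # {'hosts': 50, 'eliminated': 950}
```

The first call reproduces the 25-to-1 example in the text; the second shows the same reasoning applied to a larger, hypothetical server population.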

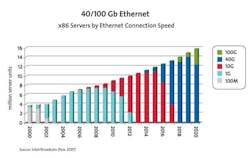

This forecast for server connection speed, supplied by Intel and Broadcom, indicates the migration from 1 to 10, then to 40 and 100 Gbit/sec transmission is upon us.

The increased number of applications per server creates the need for throughput of 10 Gbit/sec or more. Depending on a server's bandwidth requirements, an eight-core processor may be able to drive tens of Gbits/sec. The network infrastructure must therefore run at higher data rates to keep pace with much higher server I/O performance. Adoption of 10-Gbit is expected to be rapid over the next two years, both at servers and at network switches such as core and edge switches.
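A quick way to see why 1-Gbit links no longer suffice is to count the ports needed to carry a server's aggregate I/O demand. The 30-Gbit/sec demand below is a hypothetical figure standing in for "tens of Gbits/sec," and the sketch ignores protocol overhead and redundant links.

```python
import math

def ports_needed(server_demand_gbps: float, port_rate_gbps: float) -> int:
    """Smallest number of ports whose combined nominal rate covers the demand."""
    return math.ceil(server_demand_gbps / port_rate_gbps)

demand = 30.0  # hypothetical I/O demand of one multicore virtualized server, Gbit/sec
for rate in (1, 10, 40):
    print(f"{rate:>3}G ports: {ports_needed(demand, rate)}")
```

Under these assumptions the server would need 30 ports at 1 Gbit/sec, three at 10 Gbit/sec or a single 40-Gbit/sec port.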

Data centers typically run multiple networks, which create operational and maintenance issues because each network requires dedicated electronics and cabling infrastructure. Ethernet and Fibre Channel are the typical networks: Ethernet provides a local area network (LAN) between users and computing infrastructure, while Fibre Channel connects servers and storage to form a storage area network (SAN). Standards activity has focused on converging the two networks through Fibre Channel over Ethernet (FCoE).

FCoE is simply a transmission method in which the server encapsulates Fibre Channel frames in Ethernet frames before sending them over the LAN, and de-encapsulates received FCoE frames to recover the original Fibre Channel frames.
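The encapsulation step can be sketched at the byte level. The layout follows the FCoE frame format defined in FC-BB-5: an Ethernet header carrying EtherType 0x8906, a 14-byte FCoE header (4-bit version, 100 reserved bits, 1-byte start-of-frame code), the unmodified Fibre Channel frame, and a 4-byte trailer (1-byte end-of-frame code plus 3 reserved bytes). The specific SOF/EOF code values below are assumptions to verify against the standard; the sketch also omits the optional 802.1Q VLAN tag and the Ethernet FCS, which network adapters normally append in hardware. Function names are hypothetical.

```python
import struct

FCOE_ETHERTYPE = 0x8906  # EtherType assigned to FCoE
SOF_I3 = 0x2E  # assumed start-of-frame code value; verify against FC-BB-5
EOF_T = 0x42   # assumed end-of-frame code value; verify against FC-BB-5

def encapsulate(dst_mac: bytes, src_mac: bytes, fc_frame: bytes,
                sof: int = SOF_I3, eof: int = EOF_T) -> bytes:
    """Wrap a complete Fibre Channel frame (header, payload, CRC) in an Ethernet frame."""
    if len(dst_mac) != 6 or len(src_mac) != 6:
        raise ValueError("MAC addresses must be 6 bytes")
    eth_header = dst_mac + src_mac + struct.pack("!H", FCOE_ETHERTYPE)
    # FCoE header: version 0 (4 bits) + 100 reserved bits = 13 zero bytes, then SOF
    fcoe_header = bytes(13) + bytes([sof])
    # Trailer: EOF byte followed by 3 reserved bytes
    trailer = bytes([eof]) + bytes(3)
    return eth_header + fcoe_header + fc_frame + trailer

def decapsulate(frame: bytes) -> bytes:
    """Recover the Fibre Channel frame from an FCoE frame (no VLAN tag, no FCS)."""
    (ethertype,) = struct.unpack("!H", frame[12:14])
    if ethertype != FCOE_ETHERTYPE:
        raise ValueError("not an FCoE frame")
    # Skip the 14-byte Ethernet header and 14-byte FCoE header; drop the 4-byte trailer
    return frame[28:-4]
```

A round trip, `decapsulate(encapsulate(dst, src, fc))`, returns the original Fibre Channel bytes unchanged, which is the property that lets Fibre Channel storage traffic ride an Ethernet LAN.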

OM3 and OM4: the preferred fibers

OM3 and OM4 laser-optimized multimode fibers are the fiber types of choice for data center connectivity. They offer a significant cost advantage over singlemode fiber because multimode links use low-cost 850-nm transceivers for both serial and parallel transmission. The IEEE 802.3ba 40/100-Gbit Ethernet standard, ratified in June 2010, specifies parallel optics transmission for multimode fiber. Parallel transmission was specified instead of serial transmission because of 850-nm VCSEL modulation limits at the time the standard was developed. OM3 and OM4 are the only multimode fibers included in the standard. The 40/100-Gbit standard provides no guidance for Category-grade unshielded twisted-pair (UTP) or foiled/screened/shielded (F/UTP, S/STP) copper cable.
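Parallel optics reaches 40 and 100 Gbit/sec by running several 10-Gbit/sec VCSEL lanes side by side. 802.3ba's multimode interfaces, 40GBASE-SR4 and 100GBASE-SR10, use 4 and 10 lanes per direction respectively, with one fiber per lane in each direction; serial 10GBASE-SR uses a single lane on a fiber pair. The sketch below models only that nominal lane arithmetic (the line rate after 64B/66B encoding is slightly higher); the class name is hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class OpticalLink:
    name: str
    lanes_per_direction: int
    lane_rate_gbps: int = 10  # nominal data rate carried by each VCSEL lane

    @property
    def aggregate_gbps(self) -> int:
        return self.lanes_per_direction * self.lane_rate_gbps

    @property
    def fibers_used(self) -> int:
        # one transmit fiber and one receive fiber per lane
        return 2 * self.lanes_per_direction

for link in (OpticalLink("10GBASE-SR", 1),
             OpticalLink("40GBASE-SR4", 4),
             OpticalLink("100GBASE-SR10", 10)):
    print(link.name, link.aggregate_gbps, "Gbit/sec over", link.fibers_used, "fibers")
```

The fiber counts (2, 8 and 20) explain why parallel optics needs a multifiber MPO interface rather than a duplex connector.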

High-density 12-fiber MPO trunk cables can be used for duplex serial transmission and provide a migration path to parallel optics, which requires an MPO interface at the switch electronics and the server interface card.

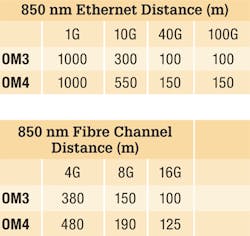

The accompanying table lists the OM3- and OM4-specified distances for Ethernet and Fibre Channel. Each distance assumes 1.5 dB of total connector loss, except for OM4 at 40/100G, which assumes 1.0 dB. OM3 and OM4 are fully capable of supporting legacy and emerging data rates, so a 15- to 20-year service life is expected for the physical layer.
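The connector-loss assumptions matter because channel insertion loss is fiber attenuation times length plus connector loss. The sketch below assumes a cabled multimode attenuation of 3.5 dB/km at 850 nm and uses the 802.3ba 40/100G reaches of 100 m on OM3 and 150 m on OM4; the function name is hypothetical.

```python
def channel_insertion_loss_db(length_m: float, connector_loss_db: float,
                              attenuation_db_per_km: float = 3.5) -> float:
    """Fiber attenuation over the channel length plus total connector loss.

    attenuation_db_per_km defaults to an assumed 3.5 dB/km for cabled
    multimode fiber at 850 nm.
    """
    return attenuation_db_per_km * length_m / 1000 + connector_loss_db

print(round(channel_insertion_loss_db(100, 1.5), 3))  # OM3 at 40/100G: 1.85
print(round(channel_insertion_loss_db(150, 1.0), 3))  # OM4 at 40/100G: 1.525
```

Under these assumptions, the extra 50 m of OM4 reach is offset by the tighter 1.0-dB connector allowance, keeping both channels under about 1.9 dB of total loss.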

High-density optical connectivity

Network switches are available with 48-port SFP+ line cards; a fully populated chassis switch can use more than 1,000 OM3/OM4 fibers for 10G duplex serial operation. Future 40/100G switches are projected to use more than 3,000 fibers per chassis where parallel optics are deployed. These high fiber counts demand high-density cable and hardware solutions that maximize use of pathways and spaces, ease cable management and simplify connections into system electronics.
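The per-chassis fiber counts follow from simple multiplication. The 12-slot chassis and the 36-port 40G line card below are hypothetical configurations chosen only to show how the totals reach the ranges cited; 8 fibers per 40G port reflects 40GBASE-SR4's four transmit and four receive lanes.

```python
def chassis_fibers(line_cards: int, ports_per_card: int, fibers_per_port: int) -> int:
    """Total fibers terminated on a fully populated chassis switch."""
    return line_cards * ports_per_card * fibers_per_port

# 10G serial: each SFP+ port uses a duplex (two-fiber) connection
print(chassis_fibers(line_cards=12, ports_per_card=48, fibers_per_port=2))  # 1152

# 40G parallel optics: hypothetical 36-port cards, 8 active fibers per port
print(chassis_fibers(line_cards=12, ports_per_card=36, fibers_per_port=8))  # 3456
```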

Bend-optimized OM3/OM4 fiber enables significantly smaller cable diameters and hardware components, yielding the highest connectivity density in the data center. Compared with traditional multimode fiber, bend-optimized OM3/OM4 fiber reduces trunk cable diameters by 15 to 30 percent and enables patch panel densities of more than 4,000 fibers. Smaller trunk cables consume less pathway and space and make more efficient use of cable trays, which results in major material cost savings.
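Because the cross-sectional area of a round cable scales with the square of its diameter, a modest diameter reduction frees a disproportionate share of tray and pathway space. The sketch below applies only that geometric relationship; it ignores packing gaps between cables, and the function name is hypothetical.

```python
def area_reduction(diameter_reduction: float) -> float:
    """Fractional cross-section reduction for a given fractional diameter reduction."""
    return 1 - (1 - diameter_reduction) ** 2

for d in (0.15, 0.30):
    print(f"{d:.0%} smaller diameter -> {area_reduction(d):.1%} less cross-sectional area")
```

A 15 to 30 percent smaller diameter therefore corresponds to roughly 28 to 51 percent less cross-sectional area per trunk.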

Data centers need to install high-density 12-fiber MPO trunk cables with OM3/OM4 fiber today. These can be used for duplex fiber serial transmission, providing an effective migration path to parallel optics.


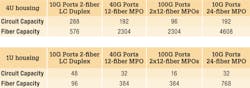

High-density modular 4U and 1U hardware patch panels easily support duplex fiber serial transmission and simplify migration to parallel optics with the use of MPO/LC modules. MPO/LC modules are used to break out the 12-fiber MPO connectors terminated on a trunk cable into simplex- or duplex-style connectors. Simplex- and duplex-style patch cords then can be used to patch into system equipment ports and crossconnect patch panels. The MPO/LC modules are easily removed and replaced with MPO adapter modules as needed to begin parallel optics transmission. 40G multimode fiber transmission will use a 12-fiber MPO, and 100G multimode fiber transmission will use a 24-fiber MPO connector at the transceiver interface.
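When an MPO/LC module is swapped for an MPO adapter module, not every MPO position carries a signal. The sketch below counts active and idle positions; the 40GBASE-SR4 assignment shown (transmit on positions 1 to 4, receive on 9 to 12, center four unused) is the commonly described 802.3ba layout and should be checked against the standard and the transceiver datasheet. Names are hypothetical.

```python
def mpo_usage(lanes_per_direction: int, mpo_positions: int) -> tuple[int, int]:
    """Return (active, idle) fiber positions for a parallel link on one MPO."""
    active = 2 * lanes_per_direction  # one transmit and one receive fiber per lane
    if active > mpo_positions:
        raise ValueError("connector has too few positions for this link")
    return active, mpo_positions - active

# Assumed 40GBASE-SR4 position layout on a 12-fiber MPO
SR4_TX = [1, 2, 3, 4]
SR4_RX = [9, 10, 11, 12]
SR4_UNUSED = [p for p in range(1, 13) if p not in SR4_TX + SR4_RX]

print(mpo_usage(4, 12))   # (8, 4)  40G on a 12-fiber MPO
print(mpo_usage(10, 24))  # (20, 4) 100G on a 24-fiber MPO
print(SR4_UNUSED)         # [5, 6, 7, 8]
```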

Patch panels have integrated trays that contain the MPO/LC modules. Each tray has four discrete MPO/LC modules to enhance modularity for moves, adds and changes. 4U and 1U patch panels have 12 and 2 trays, respectively. A 4U housing is typically used to connect into high-density electronics, as well as for crossconnects. A 1U housing is typically used for trunk cables to interconnect into top-of-rack edge switches.
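The tray and module counts above determine panel capacity. The trays per housing and modules per tray come from the text; the number of fibers each MPO/LC module breaks out is an assumption here (24, as if each module terminated two 12-fiber MPOs), and the function name is hypothetical.

```python
MODULES_PER_TRAY = 4          # from the text
TRAYS = {"4U": 12, "1U": 2}   # trays per housing, from the text

def panel_capacity(housing: str, fibers_per_module: int) -> int:
    """Fibers a fully loaded housing can break out."""
    return TRAYS[housing] * MODULES_PER_TRAY * fibers_per_module

for housing, trays in TRAYS.items():
    modules = trays * MODULES_PER_TRAY
    # 24 fibers per module is an assumed configuration
    print(housing, modules, "modules,", panel_capacity(housing, 24), "fibers")
```

With this assumption a 4U housing holds 48 modules and 1,152 fibers, and a 1U housing holds 8 modules and 192 fibers.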

The MPO/LC harness assembly, shown in the accompanying photo, has become a popular method for connecting into high-port-count network switches.

Compared to typical two-fiber jumpers, harness assemblies significantly reduce the bulk of cabling into the electronics. In addition, the harness assembly can be configured with staggered, connectorized legs that match the pitch of the electronics line card. When converting to parallel optics, you would simply remove the harness assembly and replace it with the appropriate MPO jumper cable.
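The reduction in cable bulk can be estimated by counting cables. This sketch assumes a harness built on a 12-fiber MPO with six duplex LC legs, a common but hypothetical configuration here, and compares it with one two-fiber jumper per port on a 48-port line card.

```python
import math

def harnesses_for_card(duplex_ports: int, legs_per_harness: int = 6) -> int:
    """Harness assemblies needed to reach every duplex port on a line card.

    legs_per_harness assumes a 12-fiber MPO broken out into six duplex LC legs.
    """
    return math.ceil(duplex_ports / legs_per_harness)

ports = 48
print("two-fiber jumpers:", ports)
print("MPO/LC harnesses:", harnesses_for_card(ports))  # 8
```

Under this assumption, 48 individual jumpers collapse to eight harness assemblies, each of which would later be replaced by an MPO jumper during the move to parallel optics.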


Existing and emerging network technologies are driving the need for increased data rates and fiber use in the data center. High-density optical connectivity solutions are essential to address these trends and to provide an easy migration from duplex fiber serial transmission to 12- and 24-fiber parallel optics transmission.

Doug Coleman is manager, technology and standards with Corning Cable Systems (www.corning.com/cablesystems).
