ATM delivers voice, video and data to the desktop

Structured cabling system architecture on an ATM backbone provides bandwidth to support a buildingwide network.

Barbara Maaskant, Rollins School of Public Health, Emory University

Until recently, the Rollins School of Public Health, Emory University's newest school, did not have a separate on-campus building; it leased two floors of office space in a building across the street from the main campus. Because a new building was on the drawing board, research was required to design a state-of-the-art network. Research uncovered a paper by John Reeves, regional manager at AT&T Distribution Technologies (Atlanta, GA), describing that company's Systimax structured cabling system. Construction was too far along to implement many of the features of the system suggested in the paper; however, we began to rethink how the computing department's resources could be better deployed. After several meetings with AT&T, we decided the modular approach to wiring could be integrated into our new building.

The school's data networking needs in the old building were supported by a local area network (LAN) and a suite of VMS and Unix file servers. The backbone consisted of Ethernet hardware carrying several protocol suites: Pathworks for DOS-based personal computers, transmission control protocol/Internet protocol (TCP/IP) for Unix machines and AppleShare for Macintosh computers. The system was connected to Emory's campuswide backbone via a router, which provided access to other LANs within the Emory University community and to such wide area network services as the Internet and the World Wide Web.

Planning a modular system for the new building let us use our existing hardware. Each floor of the 10-story building under construction, with its classrooms, laboratories, conference rooms, offices and support facilities, will have its own Ethernet LAN. As any system designer knows, however, managing the costs associated with moving LAN users can be a problem.

In the old building, moves, adds and changes were only a minor inconvenience; in the new building, they could become a major expense. Our moves, adds and changes typically cost between $150 and $400 each, and enough of them could quickly consume a year's operating funds from the school's limited budget.
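To see how quickly these costs add up, the short Python sketch below multiplies the article's per-change cost range by the 524 connections planned for the new building. The 20 percent annual churn rate is a hypothetical assumption chosen only for illustration; the school's actual rate would replace it.

```python
# Rough annual cost of moves, adds and changes (MACs).
# The $150-$400 cost range and the 524 connections come from the article;
# the churn rate is a HYPOTHETICAL assumption for illustration only.

def annual_mac_cost(connections: int, churn_rate: float, cost_per_mac: float) -> float:
    """Estimated yearly MAC cost in dollars."""
    changes_per_year = connections * churn_rate
    return changes_per_year * cost_per_mac


CONNECTIONS = 524
CHURN_RATE = 0.20  # hypothetical: one connection in five changes each year

low = annual_mac_cost(CONNECTIONS, CHURN_RATE, 150)
high = annual_mac_cost(CONNECTIONS, CHURN_RATE, 400)
print(f"Estimated MAC cost: ${low:,.0f} to ${high:,.0f} per year")
```

At that assumed churn rate, the yearly figure runs from roughly $15,700 to $41,900.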

To minimize the effects of moves, adds and changes, we designed each office, laboratory and classroom with at least one LAN connection. In fact, for greater flexibility, we installed three outlets in each classroom: one for the instructor, one for mobile demonstration equipment and a third in the ceiling for wireless LAN interfaces. These interfaces will provide communications links via radio waves or infrared signals between the school's LAN and students' personal communicators or computers. The two computer laboratories contain more than 25 of the 524 permanent connections planned in the new building.

In 1992, when the school's LAN was originally planned, implementing fiber to the desk was more than we could afford, but fiber was clearly the transmission medium of the future. For the new system, we therefore decided to run fiber-optic cables to each connection.

User needs

Because most of the existing PCs on the network contained Ethernet cards interfacing the nodes to twisted-pair transmission media, we also installed copper cable for data communications. Because users will eventually want to add voice and video capabilities, we ran a second copper cable to each connection to support these services. Both copper cables were Category 5 data-grade cables to provide high-quality transmission and long-term reliability.

In addition, we terminated one fiber pair at each desktop so that users can take advantage of speeds above 100 megabits per second whatever communications interface they adopt, from 155 Mbits/sec over copper to the multigigabit transmission rates proposed for fiber.

A solution still had to be found, however, for increased backbone bandwidth requirements. Strained as it was, the 10-Mbit/sec Ethernet backbone in the old building provided adequate communications. With an independent Ethernet LAN on each floor in the new building, and the potential for hundreds of simultaneous users, the new backbone needed considerably more bandwidth to support a buildingwide network.

Choosing a high-performance backbone technology is difficult enough in an environment where most users require the same kind of service. At the School of Public Health, some users need to process large amounts of data in bursts and others need only to move data between nodes. The situation is further complicated by the anticipated need to handle multimedia information in real time.

Emory University uses a 100-Mbit/sec fiber distributed data interface (FDDI) backbone to interconnect several LANs scattered across its campus. We initially planned to use FDDI in the new building as well, to simplify connection to the other campus LANs and to the campus gateway to the Internet.

Before making any critical decisions, we carefully studied our present and anticipated information and networking needs, because public health work involves many number-crunching and modeling applications. The Division of Epidemiology, for example, conducts research on large populations to trace the etiology of diseases, which requires analyzing enormous amounts of data. The Division of Biostatistics, on the other hand, does more modeling, such as calculating how long it would take to eradicate a disease with a given immunization program.

Although each user group has different needs, the common denominator, significant bandwidth requirements, nudged us in the direction of asynchronous transfer mode technology.

Through its switched interconnections, ATM gives each user the full bandwidth of a dedicated link, regardless of the number of other active users. ATM's bandwidth, currently 155 Mbits/sec, will also be extended: 622 Mbits/sec is being planned, and 2.5 gigabits per second is under discussion by the ATM Forum.
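The difference between a shared medium and a switched one comes down to simple arithmetic. This Python sketch divides a shared 10-Mbit/sec Ethernet among a hypothetical number of active users and compares the result with a dedicated 155-Mbit/sec ATM port. It is an idealized best case: it ignores collisions, protocol and cell overhead, and any limits of the switch fabric or uplinks.

```python
SHARED_ETHERNET_MBPS = 10.0  # one 10-Mbit/sec Ethernet shared by every active user
ATM_PORT_MBPS = 155.0        # each user's dedicated switched ATM port


def shared_share(link_mbps: float, active_users: int) -> float:
    """Best-case average bandwidth per user when all active users contend for one medium."""
    return link_mbps / active_users


def switched_share(port_mbps: float, active_users: int) -> float:
    """Bandwidth per user on a switch: each user owns a port, so active_users has no effect."""
    return port_mbps


for users in (10, 100, 300):  # hypothetical numbers of simultaneously active users
    print(f"{users:>3} users: shared {shared_share(SHARED_ETHERNET_MBPS, users):.3f} "
          f"Mbit/s each, switched {switched_share(ATM_PORT_MBPS, users):.0f} Mbit/s each")
```

With a few hundred simultaneous users, the shared figure falls to tens of kilobits per second each, while the switched figure stays constant.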

Based on research and discussions with experts in the field, we decided to abandon FDDI in favor of an ATM switch for the school's backbone. Unlike FDDI and other token-ring LANs, in which signals pass from node to node around the ring until they reach their destination, an ATM backbone is a star: signals travel between each node and the switch. Every connection in the building must therefore have a cabling path to the ATM switch, over which virtual connections are established.
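To illustrate what a virtual connection through the switch means, here is a toy Python model of ATM cell switching. Every ATM cell is 53 bytes, a 5-byte header plus 48 bytes of payload, and the header carries a virtual path identifier (VPI) and virtual channel identifier (VCI). The switch looks up the incoming port and labels in a table filled in when the connection is set up, then forwards the cell with new labels. The port numbers and VPI/VCI values are hypothetical, and the other header fields (GFC, PTI, CLP, HEC) and call signaling are omitted.

```python
from __future__ import annotations

from dataclasses import dataclass

CELL_SIZE = 53                          # bytes in every ATM cell
HEADER_SIZE = 5                         # header bytes (VPI/VCI plus fields omitted here)
PAYLOAD_SIZE = CELL_SIZE - HEADER_SIZE  # 48 bytes of user data per cell


@dataclass(frozen=True)
class Cell:
    vpi: int        # virtual path identifier
    vci: int        # virtual channel identifier
    payload: bytes

    def __post_init__(self) -> None:
        if len(self.payload) != PAYLOAD_SIZE:
            raise ValueError(f"payload must be {PAYLOAD_SIZE} bytes")


class AtmSwitch:
    """Toy cell switch that forwards by table lookup and rewrites the VPI/VCI labels."""

    def __init__(self) -> None:
        # (input port, VPI, VCI) -> (output port, new VPI, new VCI)
        self.table: dict[tuple[int, int, int], tuple[int, int, int]] = {}

    def connect(self, in_port: int, in_vpi: int, in_vci: int,
                out_port: int, out_vpi: int, out_vci: int) -> None:
        """Set up one direction of a virtual connection through the switch."""
        self.table[(in_port, in_vpi, in_vci)] = (out_port, out_vpi, out_vci)

    def forward(self, in_port: int, cell: Cell) -> tuple[int, Cell]:
        """Return the output port and relabeled cell; raises KeyError if no connection exists."""
        out_port, out_vpi, out_vci = self.table[(in_port, cell.vpi, cell.vci)]
        return out_port, Cell(out_vpi, out_vci, cell.payload)


# Hypothetical example: a floor hub on port 3 reaches the NOC hub on port 12.
switch = AtmSwitch()
switch.connect(in_port=3, in_vpi=0, in_vci=32, out_port=12, out_vpi=0, out_vci=101)
port, out_cell = switch.forward(3, Cell(vpi=0, vci=32, payload=bytes(PAYLOAD_SIZE)))
print(port, out_cell.vpi, out_cell.vci)
```

A real switch would discard a cell with unknown labels rather than raise an exception; the table lookup is the part that corresponds to the virtual connection.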

A structured cabling system architecture permits such applications as text, graphics, video, voice and database server access to be delivered directly to the desktop. The choice of a structured cabling system, therefore, complemented our migration to ATM.

Wiring infrastructure

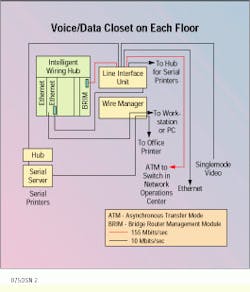

Because the new building is a multistory tower, we provided vertical and horizontal connections in a voice/data closet on each floor, aligning the closets vertically with closets on adjacent floors. Each closet contains a hub from which cabling is run through overhead cable channels to each connection on that floor.

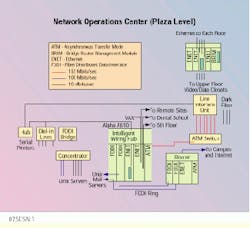

Cables running vertically from the voice/data closets connect the hub on each floor to the ATM switch. Communications on each floor thus flow horizontally from the originating node to the floor hub and then vertically to the ATM switch. Traffic passing through the switch flows to the network operations center hub and, via this hub, to servers, an extended LAN connection or the router and Emory's campus backbone. The floor hubs also perform the signal and protocol translations required between the copper-based Ethernet LANs and the fiber-optic ATM backbone.
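The path described above can be modeled as a small directed graph. In this Python sketch the node names (outlets, floor hubs, the network operations center hub and so on) are hypothetical labels, links are listed only in the outbound direction, and a breadth-first search recovers the sequence of hops from a desktop to the campus backbone.

```python
from __future__ import annotations

from collections import deque


def build_topology(floors: int = 10) -> dict[str, list[str]]:
    """Hypothetical outbound links: outlet -> floor hub -> ATM switch -> NOC hub -> destinations."""
    links: dict[str, list[str]] = {
        "atm-switch": ["noc-hub"],
        "noc-hub": ["servers", "extended-lan", "router"],
        "router": ["emory-backbone"],
    }
    for floor in range(1, floors + 1):
        links[f"hub-floor{floor}"] = ["atm-switch"]             # vertical riser run
        links[f"outlet-floor{floor}"] = [f"hub-floor{floor}"]   # horizontal run
    return links


def route(links: dict[str, list[str]], src: str, dst: str) -> list[str] | None:
    """Breadth-first search; returns the list of hops from src to dst, or None."""
    queue = deque([[src]])
    seen = {src}
    while queue:
        path = queue.popleft()
        if path[-1] == dst:
            return path
        for nxt in links.get(path[-1], []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(path + [nxt])
    return None


links = build_topology()
print(" -> ".join(route(links, "outlet-floor4", "emory-backbone")))
```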

Each of the 524 connections consists of two Category 5 data and one Category 3 voice twisted-pair cables, as well as two multimode optical fibers. We installed more than 36 miles of Category 5 twisted-pair cable and almost 18 miles of horizontal 2-fiber multimode fiber-optic cable between the connections and their concentrators. Dual vertical runs between the concentrators and the ATM switch required 2230 feet of 6-fiber multimode riser cable. The system also contains 1060 information ports, fourteen 66-port and four 48-port patch panels, and 4136 connectors and couplers. Terminations are made to flush, wall-mounted multimedia outlets designed by AT&T for the school.
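The cable totals also give a rough sense of the average horizontal run. This Python sketch assumes two Category 5 cables and one 2-fiber cable per connection, treats the approximate totals ("more than 36 miles", "almost 18 miles") as exact and ignores slack and waste. The 90-meter figure is the horizontal cabling limit in the TIA/EIA-568 standard, used here only as a point of comparison.

```python
FEET_PER_MILE = 5280
CONNECTIONS = 524

cat5_feet = 36 * FEET_PER_MILE    # "more than 36 miles" of Category 5 cable
fiber_feet = 18 * FEET_PER_MILE   # "almost 18 miles" of 2-fiber horizontal cable

avg_cat5_run = cat5_feet / (2 * CONNECTIONS)  # two Category 5 cables per connection
avg_fiber_run = fiber_feet / CONNECTIONS      # one 2-fiber cable per connection

HORIZONTAL_LIMIT_FT = 90 / 0.3048  # 90-m TIA/EIA-568 horizontal limit, in feet

print(f"Average Category 5 run: {avg_cat5_run:.0f} ft")
print(f"Average fiber run:      {avg_fiber_run:.0f} ft")
print(f"90-m horizontal limit:  {HORIZONTAL_LIMIT_FT:.0f} ft")
```

Both averages come out near 181 feet, well within the roughly 295-foot limit, and their agreement suggests the copper and fiber totals describe the same horizontal runs.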

On each floor, a multimedia access center hub can support up to 183 user connections. Each hub is equipped with a six-port bridge/router management module and enough Ethernet repeater modules to serve the users on that floor; the number of Ethernet ports per hub ranges from 21 to 63. The local Ethernet LANs connect to the ATM backbone through a 155-Mbit/sec ATM bridge/router management module.
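Because every port on an Ethernet repeater shares one collision domain, the load a hub can place on its ATM uplink is bounded by the number of shared segments it feeds, not by its port count. This Python sketch assumes, hypothetically, that each segment is a standard 10-Mbit/sec Ethernet attached to one port of the six-port bridge/router module, and it ignores ATM cell overhead, which leaves somewhat less than 155 Mbits/sec for user data.

```python
ETHERNET_SEGMENT_MBPS = 10.0  # a repeated Ethernet segment: all its ports share 10 Mbit/s
ATM_UPLINK_MBPS = 155.0       # the hub's ATM bridge/router uplink


def worst_case_uplink_load(segments: int) -> float:
    """Fraction of the uplink used if every Ethernet segment is saturated at once."""
    return segments * ETHERNET_SEGMENT_MBPS / ATM_UPLINK_MBPS


# Hypothetical segment counts; six matches the bridge/router module's port count.
for segments in (1, 3, 6):
    print(f"{segments} segment(s): {worst_case_uplink_load(segments):.0%} of the ATM uplink")
```

Under these assumptions, even six saturated segments use only about 39 percent of the uplink.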

The ATM switch connects through a 155-Mbit/sec switching hub to the centralized servers and communications hardware. This hub also contains two FDDI multimode interface processors: one provides communications between the backbone and the school's existing suite of Unix file servers; the other connects the ATM backbone to a router and Emory's campuswide FDDI backbone.

Providing for growth

Although the new building now houses most of the School of Public Health, the school's continued growth will require offices in other buildings on and off the Emory campus. Communications between these satellite offices and the new building will be provided by an Ethernet link. To accommodate this link, we added an Ethernet interface processor to provide users in those buildings with direct access to the ATM backbone.

During the past few years, the School of Public Health and the Centers for Disease Control, located adjacent to the Emory campus, have developed a working relationship. Because the CDC offers access to its computerized databases, the school has also established an Ethernet link between the CDC and the new building to provide researchers with a communications gateway to the CDC's databases.

At present, the LANs on each floor of the new building are copper-based 10-Mbit/sec Ethernet, which offers limited capacity. Because optical fiber already runs to every connection in the building, upgrading these LANs will be simpler. Just how their capacity will be increased has not been decided. A real possibility, however, is a single fiber-based, unified ATM network that could give each user a dedicated bandwidth of 155 Mbits/sec (or more). Because ATM supports real-time communications, it can carry high-speed digital data as well as voice and video, which will permit videoconferencing and other multimedia services.

Communications from each floor of the school flow through the network operations center hub to servers, remote sites, a local area network connection or through a router to Emory`s campus backbone.

Typical voice/data closet on each floor of the network has an intelligent wiring hub that supports 183 users. Cables run vertically from the closet to connect the hub on each floor to the ATM switch in the Network Operations Center.

Typical voice/data closet houses line interface units, wire managers and fiber-optic connection to the hubs.

Typical wall outlet has two data, one voice and two multimode fiber connections.

Barbara Maaskant is the director of information services at the Rollins School of Public Health, Emory University, Atlanta.