How data center interconnect drives demand for extreme-density cabling

By David Hessong

The data center interconnect (DCI) application was a hot topic at the Optical Fiber Communication Conference (OFC) earlier this year. DCI has emerged as an important, fast-growing segment of the network landscape and has been the focus of several exciting new product announcements. This article explores some of the reasons for that growth and looks at several new product technologies designed to make these links more installer-friendly.

Machine learning, 5G, and ever-larger data center campuses are all driving demand for DCI links. These deployments will challenge the industry to develop solutions that scale to make maximum use of duct space without becoming more cumbersome to deploy.

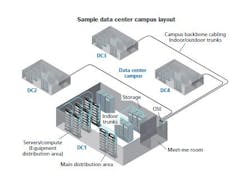

A quick internet search for large hyperscale or multi-tenant data center spending announcements turns up several expansion plans worth billions of dollars. What does this kind of investment buy? Often, the answer is a data center campus made up of several data halls in separate buildings, each frequently bigger than a football field, with more than 100 terabits per second of data typically flowing among them.

Without going too far into why these data centers are growing so large, we can boil the reasons down to two trends. The first is exponential growth in east-west (server-to-server) traffic, fueled by machine-to-machine communication. The second is the adoption of flatter network architectures, such as spine-and-leaf (folded Clos) networks. The goal is a single large network fabric spanning the campus. Because every leaf switch in such a fabric connects to every spine switch, stretching one fabric across several buildings forces a large share of those links to run between buildings, which is what drives the need for 100 terabits per second or more between them.
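To see why a flat fabric pushes inter-building bandwidth so high, here is a rough, hypothetical sizing sketch in Python. It assumes a simplified two-tier spine-and-leaf fabric in which every leaf spreads a fixed number of uplinks evenly across all spines, and some fraction of the spines sit in other buildings. The function name, switch counts, port speeds, and fraction are illustrative assumptions, not figures from any real campus.

```python
def inter_building_tbps(leaves_per_building: int,
                        uplinks_per_leaf: int,
                        uplink_gbps: float,
                        remote_spine_fraction: float) -> float:
    """Provisioned leaf-to-spine capacity leaving one building, in Tbps.

    Simplified two-tier model (an assumption): each leaf spreads its uplinks
    evenly across all spines, so the share of uplinks that cross to another
    building equals the share of spines located in other buildings.
    """
    if not 0.0 <= remote_spine_fraction <= 1.0:
        raise ValueError("remote_spine_fraction must be between 0 and 1")
    total_gbps = leaves_per_building * uplinks_per_leaf * uplink_gbps
    return total_gbps * remote_spine_fraction / 1000  # Gbps -> Tbps


# Hypothetical building: 256 leaves, 8 x 100G uplinks each, half the spines elsewhere.
print(inter_building_tbps(256, 8, 100.0, 0.5))  # 102.4
```

Even with these modest hypothetical numbers, one building's share of the fabric exceeds 100 terabits per second, and all of that provisioned capacity has to be carried on fiber between buildings.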

As you can imagine, building at this scale introduces several unique challenges across the network, from power and cooling down to the connectivity that links all the equipment together. In this article we're going to focus on the best way to connect buildings on a data center campus with singlemode fiber so that 100 terabits per second or more can flow between them.

The first question is which technology delivers this much bandwidth between buildings most cost-effectively. Several approaches have been evaluated, but the prevalent model is to transmit at lower data rates over many fibers. Note that these links are typically 2 to 3 kilometers long or shorter. Cost modeling shows that lower data rates over more fibers will remain the most cost-effective option for at least the next few years, which is why the industry is investing so heavily in high-fiber-count cables and the associated hardware used to connect the buildings on a campus.
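The arithmetic behind "lower rates over many fibers" is simple: divide the target capacity by the per-link rate, then multiply by the number of fibers each link uses. The Python sketch below does exactly that. The 100 Gbps link rate and the 2-fiber (duplex) and 8-fiber (parallel optics) link types are illustrative assumptions rather than figures from this article, and the 3456-fiber cable size is borrowed from the examples that follow.

```python
import math


def fibers_required(target_tbps: float, link_gbps: float, fibers_per_link: int) -> int:
    """Fibers needed to carry target_tbps using links of link_gbps each.

    fibers_per_link is 2 for a duplex (one transmit, one receive) link, or
    more for parallel optics that spread one link across several fiber pairs.
    """
    links = math.ceil(target_tbps * 1000 / link_gbps)  # Tbps -> Gbps, round up
    return links * fibers_per_link


def cables_required(fiber_count: int, fibers_per_cable: int = 3456) -> int:
    """Number of high-fiber-count cables needed to hold fiber_count fibers."""
    return math.ceil(fiber_count / fibers_per_cable)


# Illustrative link types (assumptions): 100G duplex and 100G over 8-fiber parallel optics.
duplex = fibers_required(100, 100, 2)
parallel = fibers_required(100, 100, 8)
print(duplex, cables_required(duplex))      # 2000 1
print(parallel, cables_required(parallel))  # 8000 3
```

This deliberately ignores spare fibers, redundant routes, and growth headroom, all of which push real counts higher, but it shows how quickly 100 terabits per second at modest per-link rates reaches thousands of fibers.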

Deployment best practices

Now that we understand the need for high-fiber-count cables, we can turn our attention to the different solutions on the market that solve this problem. These networks present new challenges in both cabling and hardware.

The industry agrees that ribbon cables are the only feasible solution for this application. Traditional loose-tube cables with single-fiber splicing would take far too long to install and would require impractically large splice hardware. For example, terminating a 3456-fiber loose-tube cable would take more than 200 hours, assuming four minutes per splice (3456 × 4 minutes is about 230 hours). With a ribbon cable, where each mass-fusion splice joins an entire 12-fiber ribbon at once, splicing time drops to less than 40 hours. Beyond these time savings, ribbon splice enclosures typically hold four to five times as many splices as single-fiber enclosures of the same footprint.
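A quick way to check these figures is to count the splices and multiply by the time per splice. In the Python sketch below, the four-minute single-fiber time comes from the paragraph above; the eight minutes per 12-fiber ribbon splice is an assumption chosen to be roughly consistent with the "less than 40 hours" figure, since no per-ribbon time is stated. The function name is hypothetical.

```python
import math


def splice_hours(fiber_count: int, fibers_per_splice: int, minutes_per_splice: float) -> float:
    """Total hands-on splicing time, in hours, at one splice point (one cable end)."""
    splices = math.ceil(fiber_count / fibers_per_splice)
    return splices * minutes_per_splice / 60


# Single-fiber splicing at 4 minutes per splice (figure from the text).
print(splice_hours(3456, 1, 4))    # 230.4
# 12-fiber mass-fusion ribbon splicing at an assumed 8 minutes per ribbon.
print(splice_hours(3456, 12, 8))   # 38.4
```

The gap comes almost entirely from the splice count: 3456 individual splices versus 288 ribbon splices, so even a per-splice time twice as long for ribbons still cuts total time by roughly a factor of six.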

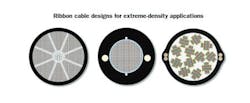

Once the industry settled on ribbon cables, it quickly realized that traditional ribbon cable designs could not achieve the fiber density per conduit that the market required. The industry set out to essentially double the fiber density of traditional ribbon cables.

Two design approaches emerged. The first pairs standard matrix ribbons with subunits that can be packed more closely together. The second uses conventional central-tube or slotted-core cable designs filled with loosely (intermittently) bonded, net-design ribbons that can fold onto themselves.

Each of these ribbon-cable designs has its own termination processes and challenges. Because these are outside plant (OSP) cables that are not flame-rated for indoor use, the National Electrical Code (NEC) requires them to transition to an inside plant (ISP) rated cable within 50 feet of where they enter the building. This is typically done in an extreme-density splice cabinet by splicing the OSP cable to preterminated MTP/MPO or LC ribbon pigtails (cable with connectors preinstalled on one end) or to stubbed hardware (hardware preloaded with pigtailed cable). At this point, end users are no longer evaluating just the OSP cable design but a complete tip-to-tip solution for these costly, labor-intensive links.

Several factors must be weighed when choosing the best tip-to-tip solution. Time studies have shown that the most time-consuming steps are ribbon identification and furcation, which together prepare the ribbons to be routed into a splice tray. Furcation is the process of protecting the ribbons with tubing or mesh, once the cable jacket has been removed, as they are routed through the hardware to a splice tray. This step takes longer as the fiber count of the cable increases: installing and splicing a single 3456-fiber link often requires hundreds of feet of mesh or tubing. The same process must also be completed for the ISP cables, whether they are pigtailed cables or stubbed hardware.

Products on the market today vary greatly in furcation time. Some solutions use routable fiber subunits on both the OSP and ISP cables, so no furcation is needed to bring the fibers to the splice tray, while others require multiple furcation kits to protect and route the ribbons. Cables with routable subunits are typically installed in purpose-built splice cabinets whose splice trays are sized to match the fiber count of each subunit.

Another time-consuming task is identifying and ordering the ribbons so that each one is spliced to its correct mate. Because a 3456-fiber cable contains 288 twelve-fiber ribbons, every ribbon must be clearly labeled so it can be sorted after the cable jacket is removed. Standard matrix ribbons can be inkjet-printed with identifying print statements, while many net designs rely on dashes of varying lengths and numbers. This step is critical because of the sheer number of fibers that must be identified and routed. Clear ribbon labeling matters just as much for network repair, when cables are damaged or cut after initial installation.
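Ribbon identification is essentially bookkeeping: every fiber number must map to exactly one ribbon and one position within it, on both the OSP and ISP sides. The Python (3.9+) sketch below assumes sequential numbering, 12 fibers per ribbon, and the TIA-598 color sequence within each ribbon. Real cables identify ribbons with manufacturer-specific print statements or dash codes, so `locate_fiber` is a hypothetical helper illustrating the mapping, not any particular cable's scheme.

```python
# TIA-598 color sequence for fiber positions 1-12 within a ribbon.
TIA_598_COLORS = ("blue", "orange", "green", "brown", "slate", "white",
                  "red", "black", "yellow", "violet", "rose", "aqua")
FIBERS_PER_RIBBON = 12


def locate_fiber(fiber_number: int, cable_fibers: int = 3456) -> tuple[int, int, str]:
    """Map a 1-based fiber number to (ribbon number, position in ribbon, color).

    Assumes fibers are numbered sequentially ribbon by ribbon, 12 per ribbon.
    """
    if not 1 <= fiber_number <= cable_fibers:
        raise ValueError(f"fiber_number must be between 1 and {cable_fibers}")
    ribbon_index, position_index = divmod(fiber_number - 1, FIBERS_PER_RIBBON)
    return ribbon_index + 1, position_index + 1, TIA_598_COLORS[position_index]


print(3456 // FIBERS_PER_RIBBON)  # 288 ribbons to identify and order
print(locate_fiber(13))           # (2, 1, 'blue')
print(locate_fiber(3456))         # (288, 12, 'aqua')
```

Because fiber colors repeat every 12 fibers, color alone only locates a fiber within its ribbon; the ribbon's own label (print or dash code) is what disambiguates the other 287 ribbons, which is why that labeling carries so much weight in the field.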

Forward-looking trends

3456-fiber cables appear to be just a starting point, as cables with more than 5000 fibers are in development. Because conduits are not getting any bigger, the other emerging trend is fiber whose coating diameter has been reduced from the industry-standard 250 microns to 200 microns. The core and cladding dimensions (including the standard 125-micron cladding) are unchanged, so optical performance is not affected. This smaller coating can allow hundreds or even thousands of additional fibers in the same-size conduit.
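The size of that gain follows from geometry: a fiber's cross-sectional area scales with the square of its coating diameter, so moving from 250 to 200 microns shrinks each fiber's footprint to (200/250)² = 0.64 of its former area. The Python sketch below turns this into an idealized upper bound on fiber count. The function name is hypothetical, and the model deliberately ignores ribbon matrix material, subunit tubes, strength members, and the jacket, all of which make real-world gains smaller.

```python
def coating_scale_factor(old_coating_um: float = 250.0, new_coating_um: float = 200.0) -> float:
    """Idealized fiber-count multiplier when each fiber's coating shrinks.

    Assumes fiber count scales inversely with each fiber's cross-sectional
    area, which is proportional to the coating diameter squared.
    """
    return (old_coating_um / new_coating_um) ** 2


factor = coating_scale_factor()
print(factor)                # 1.5625
print(round(3456 * factor))  # 5400
```

Under this idealization, a cable cross-section that holds 3456 fibers at 250 microns could hold about 5400 at 200 microns; actual reduced-coating cable counts depend on the full cable design.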

The other trend will be rising customer demand for tip-to-tip solutions. Packing thousands of fibers into a single cable solved the conduit-density problem but introduced new challenges for deployment risk and speed. Solutions that reduce these risks and shorten deployment time will continue to mature and evolve.

David Hessong is a manager of global data center market development at Corning Inc.