With fiber’s future set in stone, innovation solves data center challenges

By David Mullsteff, Tactical Deployment Systems

Emerging technologies are placing huge demands on today’s data center infrastructure with more data than ever needing to transmit at faster speeds. At the same time, several reports show that the recent COVID-19 pandemic has increased global internet traffic by nearly 70% with virtual private network (VPN) usage in the U.S. up by more than 100% since March, and online gaming and video streaming spiking to never-before-seen levels, requiring data centers to quickly deploy additional capacity.

As the need for low-latency, high-bandwidth connectivity continues to increase, it has become clear that fiber-optic infrastructure is the frontrunner due to its virtually unlimited bandwidth capabilities. There are also new advancements in switching technology, innovative cable and connector designs, standards development and additional considerations that will ultimately make fiber the de facto choice going forward for the majority of data center links.

While fiber’s future may be set in stone, key considerations when designing and deploying fiber cabling and connectivity persist. Density, ease and speed of deployment, insertion loss budgets, monitoring capabilities and flexibility are more essential now than ever. Thankfully, some fiber cable and connectivity manufacturers are responding with new innovations that are keeping pace with technology while addressing design and deployment challenges.

Fiber is the clear winner

Fiber has long been the medium of choice in backbone local area network (LAN) deployments due to its longer reach and ability to support 40- and 100-Gbit/sec speeds for aggregating and transmitting all horizontal LAN traffic to and from the main core switches. It’s no different in the data center, where backbone links between switches are typically longer and need more bandwidth to aggregate and transmit information from servers in equipment distribution areas to the core. Many enterprise data centers have migrated to speeds of 40 Gbits/sec in the backbone with 10 Gbits/sec for server connections, while large cloud and hyperscale data centers have deployed 100 Gbits/sec in the backbone and 25 Gbits/sec for server connections.

Rapid advancements in both business and consumer technology are increasing the velocities and varieties of data around the world. Technologies like 5G, the Internet of Things (IoT), the Industrial IoT (IIoT), machine-to-machine communication, autonomous vehicles, content streaming, online gaming, virtual and augmented reality, and advanced data analytics are demanding increased capacity to transmit, process and store more real-time data as quickly and reliably as possible. Thankfully there have been several advancements in fiber switching technology and short-wavelength division multiplexing (SWDM) that enable the cost-effective deployment of faster speeds to keep up with the demand.

The latest advanced 4-level pulse amplitude modulation (PAM4) signaling scheme delivers twice the bit rate of previous non-return-to-zero (NRZ), allowing the doubling of transmission bandwidth and keeping costs down. As a result, fiber transmission has shifted from a 10-Gbit/sec lane approach within high-speed Ethernet standards development to 25, 50, and even 100 Gbits/sec per lane. This significantly simplifies the deployment of 50-, 100-, 200-, and even 400-Gbit/sec fiber applications. In fact, 100 Gbits/sec is now virtually equal in cost to 40 Gbits/sec, which was based on NRZ 10-Gbit/sec-per-lane technology. Similar to wavelength division multiplexing (WDM) technology that has been used with singlemode fiber for several years, SWDM now also enables the ability to transmit signals over multiple wavelengths on OM5 multimode fiber. These technologies, combined with overall lower fiber transceiver costs, will drive continued growth of fiber in data centers of all sizes.
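To see why PAM4 doubles the bit rate relative to NRZ, it helps to count bits per symbol: NRZ uses two signal levels (one bit per symbol), while PAM4 uses four (two bits per symbol). The short Python sketch below works through that arithmetic. The 25-GBaud symbol rate is an illustrative round number chosen for this example and ignores encoding overhead; the function names are hypothetical.

```python
import math

def bits_per_symbol(levels: int) -> int:
    """Bits carried by one symbol that can take `levels` distinct amplitudes."""
    return int(math.log2(levels))

def lane_rate_gbps(symbol_rate_gbaud: float, levels: int) -> float:
    """Raw per-lane bit rate: symbols per second times bits per symbol."""
    return symbol_rate_gbaud * bits_per_symbol(levels)

# Assumption: a nominal 25-GBaud lane, ignoring encoding/FEC overhead.
SYMBOL_RATE_GBAUD = 25.0

nrz_rate = lane_rate_gbps(SYMBOL_RATE_GBAUD, levels=2)   # NRZ: 2 levels
pam4_rate = lane_rate_gbps(SYMBOL_RATE_GBAUD, levels=4)  # PAM4: 4 levels

print(f"NRZ : {bits_per_symbol(2)} bit/symbol  -> {nrz_rate:.0f} Gbit/s per lane")
print(f"PAM4: {bits_per_symbol(4)} bits/symbol -> {pam4_rate:.0f} Gbit/s per lane")
```

At the same symbol rate, the PAM4 lane carries twice the bits of the NRZ lane, which is the doubling described above.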

Based on PAM4 technology, we now have more duplex data center fiber applications to choose from for 25, 50, and 100 Gbits/sec, including the following current and upcoming IEEE Ethernet standards.

· 25GBase-SR over duplex OM4 multimode fiber up to 100 meters

· 25GBase-LR over duplex singlemode fiber up to 10 kilometers

· 50GBase-SR over duplex OM4 multimode fiber up to 100 meters

· 50GBase-FR over duplex singlemode fiber up to 2 kilometers

· 50GBase-LR over duplex singlemode fiber up to 10 kilometers

· 100GBase-FR1 over duplex singlemode fiber for up to 2 kilometers (in development)

· 100GBase-LR1 over duplex singlemode fiber for distances up to 10 kilometers (in development)

· 100GBase-DR over duplex singlemode fiber for distances up to 500 meters

In large cloud and hyperscale data centers, where 50 and 100 Gbits/sec are already being deployed for server connections, 200- and 400-Gbit/sec parallel-optic or WDM applications are making their way into the backbone. In the enterprise data center, we are now seeing 100-Gbit/sec backbone links as the new norm as switch-to-server connections migrate from 10 to 25 Gbits/sec. With the enterprise environment expected to follow the hyperscale trend, it won’t be long before we see 200 Gbits/sec and 50 Gbits/sec for enterprise backbone and horizontal links eventually taking hold.

While there are options for twinax direct attach cables like SFP28 and QSFP28 assemblies to support 50- and 100-Gbit/sec server connections, these solutions are limited to very short (3- to 5-meter) top-of-rack deployments where switches in the cabinet connect directly to servers within the same cabinet. To minimize latency and maximize port utilization and manageability, larger data centers are trending towards deploying fewer switch tiers and placing higher-density switches in an end-of-row location where they can connect to multiple servers via fiber patch panels and trunks rather than direct attach options, further increasing the amount of fiber in the data center and the need for innovative fiber patching solutions.

Looking even farther into the future, network speeds are moving to 400, 800, 1600 and even 3200 Gbits/sec. Recent announcements include the 400G BiDi MSA Group releasing the first multimode optical fiber transceiver specification (400G-BD4.2 Specification). Additionally, Deutsche Telekom Global Carrier announced they are implementing an 800-Gbit/sec link between Austrian data centers using Ciena’s WaveLogic 5 Extreme (WL5e) coherent transmission engine. These current release announcements and future design efforts are using multiple wavelengths over the optical glass and are requiring rapid deployment of new improved fiber, new and smaller fiber connectors and new easily deployed fiber-optic patching solutions.

Density always at play

Space has always been at a premium in the data center. With more fiber links and advanced high-density switches, getting more connections in less space is now more important than ever. The most popular method for connecting equipment in the data center is to deploy fiber patch panels at the equipment locations that connect to equipment ports at the front via fiber jumpers. The panels then connect to each other via preterminated plug-and-play trunk cables at the rear.

For 10GBase applications, most traditional preterminated plug-and-play systems use 12-fiber cassettes mounted within a fiber panel that features 6 duplex connector ports (e.g. LC connectors) for equipment connections at the front of the cassette and multi-fiber push-on (MPO) style connectors (e.g. MTP® connectors) at the rear of the cassette. The rear MPO port is then connected to other cabinet and rack locations via a multifiber trunk cable.

For parallel-optic applications with multifiber MPO connectivity, plug-and-play systems use cassettes with feedthrough adapters mounted in the panel. These include the latest high-speed applications such as 100GBase-SR10 that transmits and receives using 20 fibers (10 transmitting and 10 receiving at 10 Gbits/sec) via MPO-24 or 2 MPO-12 connectors and 40GBase-SR4, 100GBase-SR4, 200GBase-SR4, 400GBase-SR4 or 400GBase-SR4.2 that transmit and receive using 8 fibers (4 transmitting and 4 receiving at 10, 25, 50 or 100 Gbits/sec) using MPO-8 or MPO-12 connectors. (Note that when using MPO-12 connectors for Base-8 applications, the middle four fibers of the connector are not used.) Parallel-optic applications also include 400GBase-SR16 that transmits and receives using 32 fibers (16 transmitting and 16 receiving at 25 Gbits/sec) and 400GBase-SR8 that transmits and receives using 16 fibers (8 transmitting and 8 receiving at 50 Gbits/sec). These applications typically use MPO-16 or MPO-32 connectors.
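The fiber counts and speeds quoted above follow one simple pattern: each lane needs one transmit and one receive fiber, and the aggregate speed is the lane count times the per-lane rate. The Python sketch below tabulates that relationship for the single-wavelength applications named in the text. The class and field names are hypothetical, and 400GBase-SR4.2 is left out because it carries two wavelengths on each fiber and so does not fit the one-wavelength-per-fiber arithmetic shown here.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ParallelApp:
    """One parallel-optic application (hypothetical helper type)."""
    name: str
    lanes: int           # lanes in each direction
    lane_rate_gbps: int  # nominal rate per lane

    @property
    def fibers(self) -> int:
        # One transmit fiber plus one receive fiber per lane.
        return 2 * self.lanes

    @property
    def aggregate_gbps(self) -> int:
        return self.lanes * self.lane_rate_gbps

APPS = [
    ParallelApp("40GBase-SR4", 4, 10),
    ParallelApp("100GBase-SR10", 10, 10),
    ParallelApp("100GBase-SR4", 4, 25),
    ParallelApp("200GBase-SR4", 4, 50),
    ParallelApp("400GBase-SR4", 4, 100),
    ParallelApp("400GBase-SR8", 8, 50),
    ParallelApp("400GBase-SR16", 16, 25),
]

for app in APPS:
    print(f"{app.name:<14} {app.lanes:>2} x {app.lane_rate_gbps:>3} Gbit/s "
          f"= {app.aggregate_gbps:>3} Gbit/s over {app.fibers:>2} fibers")
```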

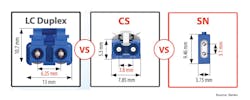

While LC connectors remain the primary interface for duplex fiber applications, the demand for greater density has led to the recent development of new small-form-factor duplex connector designs. Some of these include the Senko CS® dual fiber connector introduced in 2019, which is 40% smaller than the LC connector, and the latest Senko SN® and USCONEC ELiMENT™ MDC dual fiber connectors, which are even smaller for significantly increased density. These small-form-factor connectors use the same 1.25-mm ferrule as the LC, and are ideal for ultra-high-density patching environments, enabling 4 connectors (8 fibers) to fit into one transceiver to support 400-to-100-Gbit/sec or 200-to-50-Gbit/sec breakout applications that are becoming popular in large cloud and hyperscale deployments for server connections.

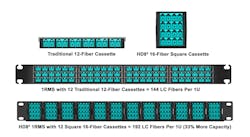

When selecting fiber patching solutions, savvy data center managers select solutions that optimize density and support the latest connector interfaces. Cassettes and adapters used with plug-and-play systems have historically been wide and flat, which has limited the number of connections that can fit in a 1U panel. Recent innovations like Tactical Deployment Systems’ HD82™ use reduced-footprint square cassettes to accommodate more connectors than traditional wide flat cassettes. For example, solutions that use wide flat cassettes typically only support 72 LC duplex connectors (144 fibers) in a 1U space. In contrast, 12 square cassettes with 8 duplex LC fiber connectors each support 96 duplex connections (192 fibers) in the same 1U space, delivering a 33% increase in capacity.

Using the latest small-form-factor CS, SN and ELiMENT MDC connectors, even higher densities can be achieved.
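The 1U density comparison for LC duplex connectors above can be checked with a few lines of arithmetic. The Python sketch below uses only the figures quoted in the text (72 duplex ports for wide flat cassettes; 12 square cassettes of 8 duplex ports each); the function name is a hypothetical helper.

```python
def panel_capacity(cassettes_per_1u: int, duplex_ports_per_cassette: int) -> tuple[int, int]:
    """Return (duplex connections, fibers) for a 1U panel."""
    duplex = cassettes_per_1u * duplex_ports_per_cassette
    return duplex, duplex * 2  # two fibers per duplex connection

# Figure quoted in the text for traditional wide flat cassettes.
TRADITIONAL_DUPLEX_PER_1U = 72

square_duplex, square_fibers = panel_capacity(cassettes_per_1u=12,
                                              duplex_ports_per_cassette=8)
increase_pct = (square_duplex - TRADITIONAL_DUPLEX_PER_1U) / TRADITIONAL_DUPLEX_PER_1U * 100

print(f"Traditional: {TRADITIONAL_DUPLEX_PER_1U} duplex / {TRADITIONAL_DUPLEX_PER_1U * 2} fibers")
print(f"Square     : {square_duplex} duplex / {square_fibers} fibers")
print(f"Increase   : {increase_pct:.0f}%")
```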

Ease and speed more critical than ever

With more applications and data moving to the cloud and more enterprise businesses outsourcing a range of IT functions to managed service providers, there is an increased focus on speed to market and the ability to easily and quickly deploy data center capacity. Cloud and managed service providers are highly focused on deployment speed to meet service level agreements (SLAs), respond immediately to changing conditions and reduce the time for customers to go live with new apps and services. In other words, the faster and more responsive these providers can get new equipment and services online, the faster revenue will start flowing for both data centers and customers alike. Deployment speed is also a focus for enterprise businesses deploying their own capacity; speeding the process helps them maintain a competitive edge within their specific market.

Speed to market isn’t the only variable demanding fast deployment. Nowhere is speed of deployment more critical than in disaster recovery and emergency response scenarios. While the recent COVID-19 pandemic and stay-at-home orders have caused a massive increase in internet traffic, with Netflix, Amazon and YouTube all needing to quickly respond by adding bandwidth, many enterprise data centers have also had to quickly respond by adding VPN server capacity, especially within hospitals and healthcare facilities that have had to rapidly increase bandwidth and server capacity to connect mobile facilities and fast-track telemedicine.

While traditional plug-and-play fiber systems have long been touted for speed of deployment, and they do indeed offer faster installation compared to fusion splicing and field termination, they are not necessarily as fast to install as they could be. First, lead times are often longer than necessary, and once the components do arrive, the individual cassettes and/or adapters need to be removed from their packaging and mounted into a fiber panel. The MPO trunk cables then need to be routed through pathways, the pulling eye removed and the cables plugged into the back of the cassettes or adapters. Removing pulling eyes is a time-consuming task, as it requires cutting off the leading end, cutting and removing the sleeve and trimming off any extra yarn. And as we all know, time is money.

Most plug-and-play cassettes and adapters also do not lock into place within a panel and tend to come loose when plugging in patch cables at the front and trunk cables into the rear. This has frustrated many a technician, requiring them to use one hand to hold the cassette or module in place during installation.

One solution to the above-mentioned challenges is the HD82 High Density Fiber Solution. Rather than using separate cassette modules and trunks, the cassettes are part of the trunk itself. This offers an easier, faster deployment. These cassette-based trunks can be easily pulled through pathways and then snapped into place within a chassis, eliminating the need to install and plug together separate components, remove pulling eyes and deal with loose cassettes.

To enable this design, the cassettes cannot be the traditional wide, flat design as these are too large for routing through pathways. The square HD82 cassette design offers greater density while being small enough to easily route as part of the trunk cable. The cassette also features a snap-on protective cap that doubles as an easily removable pulling eye once the cassette is snapped into place.

To support a design where the trunk cable and cassette are a single component, fiber terminates directly to the connectors within the cassette. To ensure reliability, these types of cassettes must include proper strain relief. With the HD82 solution, for example, the cable’s Kevlar and internal strength members are separated from the fiber inside the cassette and connected to an integrated strain relief, supporting about a 50-pound pull force while placing no strain on the fibers themselves.

Insertion loss budgets remain tight

Insertion loss is the primary performance parameter for fiber-optic networks. Expressed in decibels (dB), this loss of signal is a natural occurrence along any length of fiber and at every connection point within the channel. Fiber applications have specific maximum insertion loss requirements to maintain proper signal transmission. If the loss is too high, it will prevent the signal from properly reaching the receive end, resulting in network errors and non-functioning links. Loss budgets have become an increasing concern in recent years with higher-speed applications that have more stringent loss requirements. This remains an issue with new high-speed 50- and 100-Gbit/sec applications, especially for longer-distance deployments or those with additional connections in the channel.

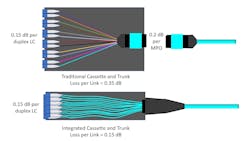

With duplex plug-and-play fiber solutions, traditional cassettes that use an MPO connector at the rear and duplex fiber connections on the front have a total insertion loss that includes both connections. In other words, the overall insertion loss per channel is the sum of all connection points (e.g. if the MPO connector has an insertion loss of 0.2 dB and the LC connector has a 0.15-dB loss, then the total loss is 0.35 dB). While a loss of 0.35 dB is not a huge concern in 10-Gbit/sec duplex multimode fiber applications like 10GBase-SR with a maximum allowed channel loss of 2.6 dB, it is a concern in recently developed high-speed duplex applications. New duplex multimode applications like 50GBase-SR have a maximum channel insertion loss of just 1.9 dB for OM4 fiber. For duplex singlemode applications, maximum channel insertion loss has decreased from 9.4 dB for 10GBase-LR to just 4.0 dB for 50GBase-FR and 3.0 dB for 100GBase-DR.

The increasingly stringent channel loss requirements coupled with the use of traditional MPO/LC cassettes can limit the number of connections in a channel and prevent the use of crossconnects at both ends of a channel that some data center operators prefer for superior flexibility and manageability. For example, assuming a typical fiber attenuation of 3.0 dB/km, the number of 0.35-dB MPO-to-LC cassettes in a 50GBase-SR deployment would be limited to 4, with very little headroom.
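The cassette limit in this example can be reproduced with a simple loss-budget calculation. The Python sketch below is a minimal illustration, not a design tool: it assumes the link runs the full 100-meter OM4 reach of 50GBase-SR (the text does not state a length) and counts only fiber attenuation and cassette loss. The function names are hypothetical.

```python
import math

def fiber_loss_db(length_m: float, attenuation_db_per_km: float) -> float:
    """Loss contributed by the fiber itself."""
    return attenuation_db_per_km * length_m / 1000.0

def max_cassettes(budget_db: float, length_m: float,
                  attenuation_db_per_km: float, cassette_loss_db: float) -> int:
    """Largest whole number of cassettes that fits in the channel loss budget."""
    available = budget_db - fiber_loss_db(length_m, attenuation_db_per_km)
    return math.floor(available / cassette_loss_db)

BUDGET_DB = 1.9            # 50GBase-SR maximum channel loss on OM4 (from the text)
ATTENUATION_DB_PER_KM = 3.0
LENGTH_M = 100.0           # assumption: full 100-meter reach
CASSETTE_LOSS_DB = 0.2 + 0.15  # MPO connection + LC connection

count = max_cassettes(BUDGET_DB, LENGTH_M, ATTENUATION_DB_PER_KM, CASSETTE_LOSS_DB)
headroom = (BUDGET_DB
            - fiber_loss_db(LENGTH_M, ATTENUATION_DB_PER_KM)
            - count * CASSETTE_LOSS_DB)

print(f"Fiber loss   : {fiber_loss_db(LENGTH_M, ATTENUATION_DB_PER_KM):.2f} dB")
print(f"Max cassettes: {count}")
print(f"Headroom     : {headroom:.2f} dB")
```

Under these assumptions the fiber consumes 0.3 dB, leaving room for 4 cassettes with roughly 0.2 dB to spare, which matches the limit cited above. A shorter link would leave slightly more margin.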

Rather than using a connector-to-connector-based cassette, integrating the cassette as part of the trunk cable itself not only speeds and eases deployment, it also eliminates a connection point. With the multi-fiber cable coming into the rear of the cassette and directly terminated to the duplex ports at the front, the extra 0.2 dB of the MPO connection is eliminated. With just a 0.15-dB insertion loss (0.1-dB typical) for the duplex port at the front of the cassette, this type of cassette-based trunk solution allows for doubling the number of cassettes in a channel, enabling more connection points and/or providing more insertion-loss headroom within a channel for greater flexibility and manageability.
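To illustrate the effect of removing the MPO connection, the Python sketch below compares the remaining headroom for 4 and 8 cassettes in a 50GBase-SR channel. It is a rough sketch under stated assumptions: a 1.9-dB budget, 0.3 dB of fiber loss (100 meters at 3.0 dB/km, a length chosen for this example), and the maximum per-cassette losses quoted in the text. The function name is hypothetical.

```python
def channel_headroom_db(budget_db: float, fiber_loss_db: float,
                        cassette_loss_db: float, cassette_count: int) -> float:
    """Remaining loss margin; a negative value means the budget is exceeded."""
    return budget_db - fiber_loss_db - cassette_loss_db * cassette_count

BUDGET_DB = 1.9       # 50GBase-SR maximum channel loss on OM4 (from the text)
FIBER_LOSS_DB = 0.3   # assumption: 100 m at 3.0 dB/km

CASSETTE_TYPES = {
    "MPO-to-LC cassette (0.35 dB)": 0.35,
    "Cassette-based trunk (0.15 dB)": 0.15,
}

for label, loss in CASSETTE_TYPES.items():
    for count in (4, 8):
        margin = channel_headroom_db(BUDGET_DB, FIBER_LOSS_DB, loss, count)
        status = "OK" if margin >= 0 else "exceeds budget"
        print(f"{label:<31} x{count}: headroom {margin:+.2f} dB ({status})")
```

In this example, 8 of the 0.15-dB cassettes still fit within the budget, while 8 of the 0.35-dB cassettes do not. Real channels also need margin for splices, patch cords and aging, so actual limits depend on the specific design.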

Network monitoring requirements

As the number of fiber links, variety of applications, strict reliability requirements and overall network complexity increase, so does the need to balance uptime with maintaining network integrity and security. As a result, the demand for network monitoring is at an all-time high. A recent report from Markets and Markets states that the overall network monitoring market is anticipated to grow at a rate of more than 9.9%, reaching $2.93 billion by 2023, up from $1.67 billion in 2017.

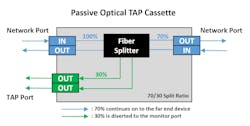

The easiest way to monitor fiber network traffic is with the use of passive traffic analysis point (TAP) solutions. These passive devices integrate an optical splitter to split the data stream into two paths: one that passes the information on to its original location and the other that transmits copied information to the monitoring port. Because they are passive and essentially invisible to the network, TAP cassettes have no impact on data transmission and require no programming or switch configuration.

When it comes to selecting a TAP solution, there are some key considerations. TAP cassettes that integrate into existing fiber patching solutions eliminate the need for additional rack space and the extra connections required with standalone TAP devices. Cassettes with a variety of port counts and those that feature both monitoring and network ports within the same cassette offer greater flexibility and can eliminate having more monitoring ports than necessary.

The split ratio is yet another consideration with TAPs. Lower-speed networks up to 10 Gbits/sec typically have a split ratio of 70/30 where 70% of the signal is allocated for the network and 30% is allocated for monitoring. This introduces less loss for the network. However, for 25 Gbits/sec and higher, it’s better to use an equal 50/50 split ratio because with a 70/30 split, the loss could be too high for the monitored traffic. Data center managers with the need to monitor traffic on both low- and high-speed links should consider TAP cassettes from vendors that offer multiple split ratios.
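The trade-off between split ratios can be put in decibel terms. The Python sketch below computes the theoretical loss on each output of an ideal splitter from the fraction of optical power it receives. This is a simplified model: it ignores the splitter's excess loss and any connector loss, so published TAP specifications will be somewhat higher. The function name is hypothetical.

```python
import math

def split_loss_db(power_fraction: float) -> float:
    """Ideal insertion loss for an output that receives `power_fraction` of the light."""
    return -10.0 * math.log10(power_fraction)

SPLIT_RATIOS = {
    "70/30": (0.70, 0.30),  # (network share, monitor share)
    "50/50": (0.50, 0.50),
}

for label, (network_share, monitor_share) in SPLIT_RATIOS.items():
    print(f"{label}: network path {split_loss_db(network_share):.2f} dB, "
          f"monitor path {split_loss_db(monitor_share):.2f} dB")
```

In this idealized model, a 70/30 split costs the network path about 1.5 dB but the monitor path more than 5 dB, while a 50/50 split costs each path about 3 dB. That illustrates why the monitored signal can become too weak with a 70/30 split on higher-speed links with tight budgets.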

More to think about

While technology advancements and the need for bandwidth ensure that fiber’s future is set in stone within the data center, challenges surrounding density, speed of deployment, flexibility, performance and monitoring capabilities remain and are even more of a consideration than ever before. Thankfully, amid new PAM4-based 25-, 50-, and 100-Gbit/sec duplex fiber applications, there are new innovations such as square cassette-based trunks that not only deliver higher densities in a 1U space, but also provide fast and easy installation and lower insertion loss.

However, there is even more to consider. With cloud data center and hyperscale space deployment configurations varying widely, the ability to customize solutions and select from a wide range of connector types is a key consideration. Data center owners and operators would also be wise to choose a trusted fiber solution provider that will continue to evolve and support emerging and future connector designs while using the highest-quality components such as premium-grade Senko and USCONEC connectors.

At the same time, data center administrators need to ensure that they are partnering with solution providers who can offer quick-turn manufacturing for reduced lead times. Selecting fiber components that are made in the U.S.A. is therefore imperative, especially amid the recent COVID-19 pandemic that has significantly slowed down, and in some instances halted, the international supply chain and prevented components coming from offshore providers. In fact, under the Coronavirus Aid, Relief and Economic Security Act (CARES Act), Small Business Administration (SBA) loans require that to “whatever extent feasible, loan recipients will purchase only American-made equipment and products.” Furthermore, offshore suppliers of fiber connectivity have been shown to have subpar quality and mechanical reliability. No matter how quickly and easily fiber connections can be deployed in the data center, it simply doesn’t matter if the quality is not there to reliably support ongoing high-speed data transmission and ensure maximum uptime.

Editor’s note: CS® and SN® are trademarks of Senko. MTP® and ELiMENT™ are trademarks of US CONEC Ltd.

David Mullsteff is chief executive officer and owner of Tactical Deployment Systems, which he founded to deliver fiber-optic solutions focusing on quick-turn manufacturing and new product line development. He also is chief executive officer and owner of Cables Plus, and has more than 30 years’ experience in the data communications industry as a corporate strategist, marketer and business-development professional.