Inside the EPA’s energy efficiency report

Report to Congress details technologies and their impact and implementation in the data center.

by Betsy Ziobron

August 2007’s “EPA Report to Congress on Server and Data Center Energy Efficiency” (available at www.energystar.gov) relates green initiatives and implications for the cabling industry. While its notable findings and industry reaction have been well publicized, the 130-page document covers much more than just data center consumption statistics, growth trends, and potential energy-efficient scenarios.

The Environmental Protection Agency’s report details current energy-efficient technologies and methods, their impact on the existing power-grid and data-center reliability, and the barriers, incentives, and recommendations surrounding their adoption.

Technologies to consider

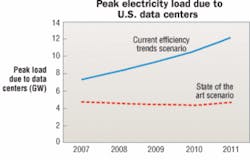

According to the report, the energy used by the nation’s servers and data centers in 2006 is estimated at 61 billion kilowatt-hours (kWh), which is 1.5% of total U.S. consumption and more than double the amount of electricity consumed for this purpose in 2000. Using estimation methods based on the best publicly available data, the report estimates that, under current efficiency trends, the energy consumption of servers and data centers will nearly double by 2011.
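As a rough check on the scale these figures imply, the arithmetic can be sketched in a few lines of Python. The sketch assumes consumption exactly doubles between 2006 and 2011 (the report says "nearly double," so this is an upper bound) and that growth compounds evenly each year; neither assumption comes from the report, and the variable names are illustrative.

```python
# Back-of-the-envelope arithmetic using the figures quoted from the EPA report.
# Assumptions (not from the report): consumption exactly doubles over the
# five years from 2006 to 2011, and growth compounds evenly each year.

DATA_CENTER_KWH_2006 = 61e9   # 61 billion kWh, as quoted
SHARE_OF_US_TOTAL = 0.015     # 1.5% of total U.S. consumption, as quoted
YEARS = 2011 - 2006           # projection window

# Total U.S. electricity consumption implied by the two quoted figures.
implied_us_total_kwh = DATA_CENTER_KWH_2006 / SHARE_OF_US_TOTAL

# Compound annual growth rate that doubles consumption in YEARS years.
annual_growth = 2 ** (1 / YEARS) - 1

# Projected 2011 consumption under the exact-doubling assumption.
projected_2011_kwh = DATA_CENTER_KWH_2006 * (1 + annual_growth) ** YEARS

print(f"Implied U.S. total: {implied_us_total_kwh / 1e9:,.0f} billion kWh")
print(f"Implied annual growth: {annual_growth:.1%}")
print(f"Projected 2011 use: {projected_2011_kwh / 1e9:,.0f} billion kWh")
```

Under these assumptions, the quoted figures imply total U.S. consumption of roughly 4,067 billion kWh and data center growth of just under 15% per year.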

Potential energy savings can be gained by using the following current and future technologies outlined in the report:

Advanced microprocessors: The shift to new low-voltage processors that contain two or more processing cores on a single die offers a combination of increased performance and reduced energy consumption. Dynamic frequency and voltage scaling is another energy-reducing feature, allowing microprocessor voltage to ramp up or down based on computational demand. New server microprocessors designed to facilitate virtualization are also helping data center managers consolidate several dedicated servers onto fewer machines, for energy savings as well as operational efficiency.

Energy-efficient servers: Many server manufacturers are offering more energy-efficient servers that not only include the abovementioned microprocessor features but also employ high-efficiency power supplies and internal variable-speed fans for on-demand cooling. The EPA estimates that, on average, energy-efficient servers will consume approximately 25% less energy than other similar server models.

Innovative storage devices: A shift to smaller form-factor disk drives and increased use of serial advanced technology attachment (SATA) drives make for significantly more efficient storage devices that, according to the EPA report, will decrease power use by approximately 7% by 2010. Improved storage-management practices, such as storage virtualization, data de-duplication, storage tiering, and the powering down of archival storage devices not in use, will also go a long way in providing energy savings. Emerging solid-state flash memory devices may also provide more energy-efficient storage in the data center.

Improved site infrastructure systems: In addition to low-cost measures, such as the use of a hot-aisle/cold-aisle configuration that is now a common practice, innovative power and cooling systems are important to energy savings because they account for more than 50% of the total data center consumption. Upgrading to more efficient UPS systems and water-cooled chillers with variable-speed fans and pumps can provide further energy savings.

Distributed generation and combined heat and power: When distributed generation (DG) technologies, such as fuel cells, microturbines, gas turbines, and reciprocating engines, are used in combined heat and power (CHP) systems that use waste heat to provide cooling, the energy savings can be substantial. According to the report, these systems can have payback periods that range from less than 5 years to about 10 years, depending on the technology. Additionally, the use of DG and CHP in data centers can provide increased reliability, decreased risk of outages, and easier future expansion by avoiding the need for utility infrastructure upgrades. These systems also reduce greenhouse gas emissions.
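The payback range quoted for DG and CHP systems can be related to a site's own numbers with a simple-payback calculation. The following is a minimal sketch, not the report's method: the function name and the cost and savings figures are hypothetical, and it ignores financing, maintenance, and changes in energy prices.

```python
def simple_payback_years(capital_cost: float, annual_net_savings: float) -> float:
    """Years needed for cumulative savings to equal the up-front cost.

    Simple payback only: no discounting, financing, or price escalation.
    """
    if annual_net_savings <= 0:
        raise ValueError("annual_net_savings must be positive")
    return capital_cost / annual_net_savings


# Hypothetical figures for illustration only (not taken from the EPA report).
installed_cost_usd = 1_500_000
yearly_savings_usd = 250_000

years = simple_payback_years(installed_cost_usd, yearly_savings_usd)
print(f"Simple payback: {years:.1f} years")
```

With these made-up inputs the result is 6 years, which happens to fall inside the range the report cites; real projects would need site-specific cost and savings estimates.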

“The key takeaway from the EPA report is that there are many strategies that can be implemented in different areas of the data center, and every option described refers to a platform that data center managers should be looking into,” says Carl Cottuli, vice president of APC’s Data Center Science Center (www.apc.com).

Effects to observe

Chapters 3 and 4 of the EPA report outline the impact of data centers on the power grid and analyze the potential cost and energy savings associated with the technologies previously described.

“The data center is an enormous concentration of power per square foot, and it’s not unusual for a data center that takes up only 15% of a facility’s square footage to account for half of the entire facility’s power consumption,” says Cottuli. “If data center managers walk away with the understanding that the adoption methodologies outlined in the EPA report can have a significant impact on the utility bill, then the report has done its job.”

According to the EPA report, a “state-of-the-art” scenario in which all U.S. servers and data centers operate at maximum energy efficiency using the technologies and practices outlined above will yield up to 80% improvement in energy efficiency. In contrast, the “improved operation” scenario that includes current operational energy-efficient trends with little or no capital investment will yield only 30% improvement (see “Energy consumption an overriding issue,” Cabling Installation & Maintenance, November 2007, page 33).

To estimate the impact of the projected energy savings on the utility grid and generating capacity, the report also translates the savings into peak power demand using the National Energy Modeling System, which provides a baseline forecast of the U.S. energy sector. According to the report, the “state-of-the-art” scenario could reduce peak load from data centers over the next five years, which would avoid about 600 megawatts of new power-plant capacity.

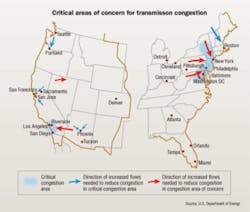

While these savings are significant, the report also states that the impact of data centers on the electrical transmission and distribution grids relies heavily on location. While comprehensive statistics on data center location are not available, due mainly to security and confidentiality concerns, it is assumed that the majority of data centers are located in metropolitan areas. The movement of large data centers to more rural areas due to lower land and construction costs will have relatively less impact on the power grid; however, the report warns that building non-urban data centers in clusters could contribute to new areas of grid congestion in the future.

According to the report, more efficient servers and technologies will continue to focus primarily on performance with small, infrequent, or irrelevant latencies. Overall, the equipment will not be less functional, and while some manufacturers may offer models with fewer features as a way to reduce power consumption, only customers who don’t require the omitted features will purchase them.

While some of the methods and technologies will not increase cost, the report states that those that do will typically be implemented only when the savings are considerably larger than the cost increase. In general, the potential cost increases are expected to be much less than the value of lifetime energy savings and avoided site-infrastructure costs, providing a lower total cost of ownership.

Barriers to overcome

The EPA report also addresses key factors that impede adoption of energy-efficient technologies and methods, many of which are the same barriers that have previously limited adoption of new technologies in the industry.

Not surprisingly, cost is the number-one barrier, and many of the report’s suggested efficiency improvements are expensive. But money spent on data center upgrades and implementation is more tangible than money saved on future energy costs, and many vendors are reluctant to sell more expensive products over less expensive options that provide the same function.

“We embrace a pay-as-you-go methodology where you maintain an efficiency of 60 to 80%, and when you’re operating at the higher 80% efficiency, you add more and start back at 60% again,” says Cottuli. “If you do this over the life of the data center, the cost is spread out over time.”
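One way to picture the pay-as-you-go approach Cottuli describes is as a simple capacity-planning loop. The sketch below is an interpretation rather than APC's method: it assumes the 60-to-80% figure refers to utilization of installed power or cooling capacity (load divided by capacity), and the function name, thresholds as parameters, and load readings are all hypothetical.

```python
def pay_as_you_go(loads_kw, low=0.60, high=0.80):
    """Return the installed capacity (kW) in place after each load reading.

    Capacity starts sized so the first load sits at the `low` utilization.
    Whenever utilization reaches `high`, capacity is expanded so that the
    current load is back at `low`. Thresholds are assumptions based on the
    60-to-80% range quoted in the article.
    """
    if not loads_kw:
        return []
    capacity = loads_kw[0] / low
    plan = []
    for load in loads_kw:
        if load / capacity >= high:
            capacity = load / low   # add modules; utilization returns to `low`
        plan.append(capacity)
    return plan


# Hypothetical yearly peak IT loads in kW (illustrative values only).
loads = [60, 70, 85, 95, 115]
for load, cap in zip(loads, pay_as_you_go(loads)):
    print(f"load {load:>4} kW  capacity {cap:6.1f} kW  utilization {load / cap:.0%}")
```

In this example capacity is expanded twice, in the third and fifth periods, so spending tracks growth in load instead of being committed up front.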

Another barrier to implementation is data center managers’ lack of knowledge and responsibility concerning energy costs and a company’s utility bill. Those responsible for paying the bill are typically different from those responsible for capital investment, and many data centers are not sole tenants of a given building, or their equipment is being hosted by a separate facility.

“We believe it’s vital for data center managers to see and understand their utility bill, and that is starting to happen,” says Cottuli. “As data center managers are barred from rolling out high-density computing due to the impact on power and cooling, and as consolidation and server virtualization are adopted, we’re seeing a need for the two entities to come together. In 2008, new efforts surrounding green initiatives and improved operational expense will put these two groups at the same table.”

Reliability and uptime have always been the utmost concern among data center managers, resulting in the use of redundant energy loads and reluctance to adopt new (perhaps unproven) technologies. The key is to ensure that redundancy is achieved in the most efficient way possible while educating the industry about potential energy-saving technologies and practices that do not impact reliability.

“There’s always been the fallacy that green efforts in the data center mean lower reliability, and it’s simply not true,” says Cottuli. “Operating six cooling units at a higher percentage of their capability is far more efficient than running 10 at just 10%. Some believe that shutting down four units will eliminate redundancy. Yes, it’s possible to shut down the wrong four units, but smart implementation of green efforts not only improves energy efficiency but reliability as well.”

The report includes several noteworthy policy recommendations to overcome these barriers, including:

- Standardized energy performance measurement;

- Private-sector challenge from the federal government to corporate executives;

- Data center metering of energy use and consumption;

- Energy Star specifications for data center products;

- Modified federal procurement specifications to require energy-efficient products;

- Financial and tax incentives to encourage adoption;

- Demonstrations and awareness campaigns to improve education.

Starting now

With help from industry organizations like The Green Grid (www.thegreengrid.org), a non-profit consortium dedicated to developing standards and measurement methods for data center energy-efficient performance, Cottuli is confident adoption of standards will begin this year.

“In order to determine if you are saving energy, you need to first have a baseline on what your data center is consuming today,” says Cottuli. “The industry needs to get behind one measurement standard and then push it. Every technology and recommendation in the EPA report relies on the fact that we know what the energy efficiency is today and can measure improvements tomorrow.”
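The baseline-then-measure idea Cottuli describes reduces to a simple comparison once metered data exists. The sketch below is illustrative only: the function name and the monthly kWh readings are hypothetical, and it does not represent any measurement standard from The Green Grid or the EPA report.

```python
def percent_reduction(baseline_kwh: float, current_kwh: float) -> float:
    """Percentage drop in consumption relative to the baseline.

    A negative result means consumption rose compared with the baseline.
    """
    if baseline_kwh <= 0:
        raise ValueError("baseline_kwh must be positive")
    return (baseline_kwh - current_kwh) / baseline_kwh * 100


# Hypothetical monthly meter readings in kWh (illustrative values only).
baseline_months = [410_000, 395_000, 405_000]        # before any changes
after_upgrade_months = [330_000, 318_000, 324_000]   # same months, after changes

baseline = sum(baseline_months)
current = sum(after_upgrade_months)
print(f"Reduction versus baseline: {percent_reduction(baseline, current):.1f}%")
```

Comparing like periods (the same months, a similar IT load) matters here; otherwise seasonal cooling demand or growth in equipment can mask or exaggerate the change.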

BETSY ZIOBRON is a freelance writer and regular contributor to Cabling Installation & Maintenance.

Energy-saving practices to deploy today

While some of the technologies and methods described in the EPA report may seem too costly, complex, or risky to implement, and others are waiting for a standardized measurement, many viable opportunities exist for improving data center energy efficiency today.

“For years, data center managers have looked at the specifications of products to determine power and cooling, but those specifications are often worst-case scenarios,” says Carl Cottuli, VP of APC’s Data Center Science Center (www.apc.com). “So, all the equipment you purchased was based on a false number to begin with, resulting in oversized systems and products. You also have to consider the energy and raw materials that went into manufacturing and transporting those products.”

Cottuli adds, “It’s important to look at consumption load and drive these systems dynamically. By understanding what the real consumption model is, data center managers can have the same protection but do it cheaper.”

Cottuli points out that high-efficiency power supplies and cooling systems are available now, and reiterates the ability for data center managers to pay as they go. “We’ve been developing for energy savings for quite some time with high-efficiency cooling systems and UPS,” he says. “For example, row- or rack-based cooling systems are now available for providing premium localized cooling for higher-density applications while lower-energy room-based cooling is maintained for low-density applications.”

Eliminating unmanaged change is another opportunity that data center managers can implement today. “Because data centers are complex and integral to business, fear reigns supreme,” says Cottuli. “When new equipment is brought online, old hardware is often left in place. Data center personnel get used to walking by the unused equipment, and eventually they refuse to touch it for fear that it still supports something or may be needed. Managed change allows you to deploy the right equipment in the right locations and discontinue using old equipment on a schedule.”

According to Cottuli, unmanaged change can also lead to stranded capacity. “In a 5,000-square-foot data center with 1,000 square feet of premium cooling for high-density equipment, unmanaged change can cause someone to install low-density equipment in that premium space,” he explains. “Now, you don’t have room for additional high-density equipment, and you’re not optimizing your cooling or energy use.” -BZ