Cabling in the data center: Just a piece of the puzzle

The dense, bandwidth-intensive environment creates significant demands on all forms of infrastructure.

The emergence of data centers as a bountiful market could really be dubbed a re-emergence. In the bubble days of the late 1990s, “Internet hotels,” as they often were called, abounded. These colocation facilities were businesses in and of themselves, housing networking gear for well-conceived enterprises as well as the many that had awful business plans but plentiful venture capital money. When there proved to be far more of the latter than the former, these Internet hotels became the late-20th-century version of ghost towns, devoid of activity.

To say it took an act of Congress to get data centers back to a place of prominence within the networking industry is not entirely an exaggeration. In fact, some say it took two such acts. “HIPAA and Sarbanes-Oxley are why the data center market has exploded,” comments Benoit Chevarie, product line manager with Belden (www.belden.com), referring respectively to the 1996 and 2002 pieces of legislation that greatly affected the amount of electronic data stored in these environments, and the manner in which that data is stored. (See the sidebar “HIPAA and Sarbanes-Oxley” for details on each piece of legislation.)

Multiple drivers

Chevarie points out that while HIPAA and Sarbanes-Oxley are the two most significant drivers of the data-center market, they are not the only ones. “Networks really are becoming the center of business activity,” he notes, “from transactions within the finance and banking industry, to all forms of electronic commerce.” Speed, reliability, and security all carry significant weight in such activities.

“C-level executives could go to jail if their companies’ accounting and security measures do not meet Sarbanes-Oxley,” explains Zurica DSouza, product information manager, data center infrastructure systems with APC (www.apcc.com). Part of compliance, and hence a crucial requirement for data centers, is ensuring availability of information. “Once lost, information is difficult to recover and nearly impossible to duplicate,” she says. “That is causing data center users to look at all elements of their physical infrastructure much more closely and with keener eyes.”

Alan Ugolini, a data center specialist with Corning Cable Systems (www.corningcablesystems.com), echoes the multiple-driver sentiment. “Some drivers are regulatory. For example, Sarbanes-Oxley will dictate much of what organizations like Bank of America or JP Morgan Chase do in their data centers. At the same time, a health-care-related company like Blue Cross/Blue Shield will have to meet HIPAA requirements, and will do so in its data center.”

“At the same time,” Ugolini adds, “every company is facing pressure to do business better. Many are implementing SAP for enterprise resource planning and customer-relationship management.” Furthermore, he adds, some companies deploy backup data centers for redundancy and security purposes.

All these factors add up to a data-center market that has grown significantly in the past several years, and shows every sign of continued growth into the future.

Storing and sending data

The regulations within HIPAA and Sarbanes-Oxley that drive data-center activity concern maintaining electronic records, whether they are financial or medical in nature. Consequently, in most cases, the primary activity in a data center is the storage of data. So, while LANs are the entities of utmost concern in office-building environments, storage area networks (SANs) take on great importance in the data center.

“They are the two sides of the house,” says Corning Cable Systems’ Ugolini. “Typically, you find Ethernet deployed to run IP [Internet Protocol] in the LAN and Fibre Channel to handle storage in the SAN.”

And typically, both the LAN and the SAN in the data center must be robust. “Huge chunks of data must be transmitted, and that requirement goes hand-in-hand with the need for availability,” says DSouza.

While Ethernet has followed a traditional 10× upgrade path from 10 to 100 to 1,000 to 10,000 Mbits/sec, Fibre Channel follows a roadmap that has doubled speeds, from 1- to 2- to 4-Gbits/sec.

“On the LAN side, server NICs [network interface cards] used to be 10/100 Mbits/sec,” Ugolini notes. “Now, gig-speed NICs are common, and users’ backbones have to increase in speed because they don’t want to oversubscribe. 10-Gig will start to become dominant.” He further explains that on the SAN side, 1-, 2-, and 4-Gbit/sec Fibre Channel are used frequently inside data centers. A 10-Gbit/sec Fibre Channel protocol does exist, though most often it is used to connect multiple buildings in a campus or metropolitan environment.
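To put a rough number on the oversubscription concern Ugolini raises, the short Python sketch below divides the total bandwidth of the server NICs by the total bandwidth of the backbone uplinks. The function name, the server count, and the uplink count are hypothetical values chosen for illustration; they do not come from the sources quoted here.

```python
def oversubscription_ratio(server_count, nic_gbps, uplink_count, uplink_gbps):
    """Return server-facing bandwidth divided by backbone uplink bandwidth.

    A result of 1.0 means the uplinks can carry every NIC at full rate;
    higher values mean the backbone is oversubscribed by that factor.
    """
    if uplink_count <= 0 or uplink_gbps <= 0:
        raise ValueError("uplink capacity must be positive")
    downstream = server_count * nic_gbps    # total NIC bandwidth, Gbits/sec
    upstream = uplink_count * uplink_gbps   # total uplink bandwidth, Gbits/sec
    return downstream / upstream


# Hypothetical cabinet row: 40 servers with gig-speed NICs and two uplinks.
gig_backbone = oversubscription_ratio(40, 1.0, 2, 1.0)
ten_gig_backbone = oversubscription_ratio(40, 1.0, 2, 10.0)

print(f"1-Gbit/sec uplinks:  {gig_backbone:.0f}:1")
print(f"10-Gbit/sec uplinks: {ten_gig_backbone:.0f}:1")
```

Under these assumed numbers, moving the two uplinks from 1 to 10 Gbits/sec cuts the ratio from 20:1 to 2:1, which is the kind of arithmetic that pushes backbones toward 10-Gig once gig-speed NICs become common.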

Belden’s Chevarie states that, as the protocol’s name indicates, Fibre Channel deployments typically incorporate optical connections. “SANs use a lot of fiber,” he says. “The equipment has a fiber input built-in.”

“SAN equipment is getting dense as fiber transceivers get dense,” Ugolini adds. “Some cabinets contain two pieces of equipment, each of which has 512 ports.”

Keeping it cool

Such density has several implications for the data center, the most significant of which is heat. Keeping data-center equipment and the center itself cool is a never-ending challenge for data-center managers and a primary focus for many of the vendors that sell products into this market.

“If you stacked up the issues that are important in a data center, cooling would be on top,” Ugolini says. He points out that while reliability certainly is a major concern, data-center managers stay on top of their equipment and infrastructure systems, so those elements infrequently fail. Heat, however, is a culprit that can undermine otherwise trustworthy equipment and infrastructure, so reliability is a concern insomuch as it is a victim of an overheated data center.

The hot-aisle/cool-aisle topology put forth years ago by The Uptime Institute (www.upsite.com) and adopted in the TIA-942 standard for structured cabling systems in the data center is employed almost universally. Yet, while that layout does only good things for thermal control, it also does only so much. Nearly every product and technology that is designed for a data center environment must consider or in some way address the issue of heat generation and thermal control. (The overall topic of data center heat and cooling issues will be covered in detail over several issues of this magazine later this year.)

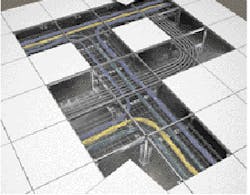

Where does structured cabling fit in as an element of the data center and a consideration in overall thermal management? In short, cabling cannot help the situation but could adversely affect it if not managed properly. “When running cables underneath a raised floor, make sure they are underneath the hot aisle,” says Chevarie. He adds that everyday good cable management within cabinets can also help ensure that the cabling does not become a detriment to keeping the environment cool.

“Poor choices can hurt,” adds Ugolini. “Bulky cables can create dams that block airflow, so a good move is to choose a cable that does not take up a lot of area in the cabinet.” He adds that a well-thought-out cabling design can prevent the cabling system from becoming an airflow problem. “When you run cables underfloor, reduce the necessity to pop tiles every time you have to make a change. Popping a tile changes the air pressure inside the room and constantly popping tiles will hurt the cooling efforts. Having the right architecture can mean a lot.”

For all the efforts that should be made to ensure the cabling system is not a hindrance to the cooling process, the fact is most data centers still will use significant amounts of energy on mechanical cooling systems. Couple that with the energy spent to power all the networking devices that are the reason for the center’s existence, and data centers are massive energy consumers.

Location, location, location

For that reason, energy costs are a significant factor in determining where, geographically, data centers are located. Whether because of governmental regulation, sound business practice, or some combination of both, many businesses have established remote data centers far from their primary centers of operations. “Many remote data centers represent a mirror image of what companies have at their main locations,” explains Chevarie.

“They are established as disaster-recovery sites,” adds DSouza, “to provide backup, whether the disaster faced is natural or planned. And they provide the ability to source information from a remote location. Many of our customers are headquartered in major metropolitan areas, where real estate is expensive and the cost of energy is expensive. Among their objectives for their data centers at these primary locations is to optimize as many elements as possible to lower total cost of ownership.”

When these companies must establish remote data centers, they are not bound by the economic pressures of their headquarters locations. So, most often, they seek out areas of the country where real estate and energy are, comparatively speaking, readily available and inexpensive.

“The Midwest has become a popular region because of its real-estate and power prices,” comments Ugolini.

Chevarie says that the availability of professional expertise is also a consideration. “Colorado is home to a number of remote data centers today,” he explains. “In the late 1990s it was a center of activity for many CLECs [competitive local exchange carriers].” Some of the professionals who administered those plants back then, he says, now manage remote data centers in the same region.

Ugolini notes that outsourcing is making a comeback in some pockets as well, reminiscent of the days of the Internet hotels. “They were fiber-rich environments,” he points out, “often referred to as the ‘fiber highway.’ A lot of enterprise companies today require so much data storage today they could make use of that kind of capacity.”

PATRICK McLAUGHLIN is chief editor of Cabling Installation & Maintenance.

HIPAA and Sarbanes-Oxley

The Health Insurance Portability and Accountability Act of 1996 (HIPAA) includes four sections that require the Secretary of the United States Department of Health and Human Services to publicize standards for the electronic exchange, privacy, and security of health information. These sections are known as the Administrative Simplification rules. HIPAA’s Privacy Rule, like the Administrative Simplification rules, applies to health plans, health-care clearinghouses, and any health-care provider that transmits health information in electronic form.

These organizations must maintain their privacy policies and procedures, privacy practices notices, disposition of complaints, and other actions, activities, and designations that the Privacy Rule requires to be documented, for six years after the later of the date of their creation or last effective date.

The Sarbanes-Oxley Act of 2002 originally stated that any accountant who conducts an audit of a company falling under the auspices of the law shall maintain all audit or review workpapers for a period of five years from the end of the fiscal period in which the audit or review was concluded.

In March 2003, the Securities and Exchange Commission amended the regulation to require accountants who audit or review an issuer’s financial statements to retain certain records relevant to that audit or review for seven years after the auditor concludes the audit or review. Additionally, the rule addresses the retention of records related to the audits and reviews of not only issuers’ financial statements but also the financial statements of registered investment companies.
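For readers who track retention dates in software, the periods described above can be sketched in a few lines of Python. This is an illustration only, not legal guidance: the function names are hypothetical, the sketch covers just the six-year HIPAA period and the seven-year amended Sarbanes-Oxley period as summarized in this sidebar, and the handling of a February 29 start date is an assumption.

```python
from datetime import date


def add_years(start, years):
    """Return the same calendar day `years` later."""
    try:
        return start.replace(year=start.year + years)
    except ValueError:
        # Assumption: a Feb. 29 start maps to Feb. 28 in a non-leap year.
        return start.replace(year=start.year + years, day=28)


def hipaa_retain_until(created, last_effective):
    """Six years after the later of the creation or last effective date."""
    return add_years(max(created, last_effective), 6)


def sox_retain_until(audit_concluded):
    """Seven years after the auditor concludes the audit or review."""
    return add_years(audit_concluded, 7)


# Hypothetical dates, for illustration only.
print(hipaa_retain_until(date(2004, 3, 1), date(2005, 6, 30)))
print(sox_retain_until(date(2004, 2, 29)))
```

With these sample dates, the HIPAA documentation would be kept through June 30, 2011, and the audit records through February 28, 2011.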

Sources: U.S. Department of Health and Human Services, U.S. Securities and Exchange Commission