As 400G comes to the cloud, multimode fiber provides the path

Forthcoming standards for 400-Gbit/sec Ethernet will operate over 4- and 8-fiber-pair constructions of multimode fiber, leveraging PAM-4 signaling.

By John Kamino

The rise of cloud-based services continues to change the design of data centers and how data center traffic is transmitted, both internally and between data centers. As video-rich social media and other content-sharing Web 2.0 applications push consumer demand for speed and capacity, enterprises are also migrating to public cloud services offered by hyperscale vendors or building private clouds that provide owned (rather than leased) facilities for their users, using the same type of architecture as the hyperscale providers.

These architectures are driving demand for data rates beyond 100 gigabits per second (Gbits/sec), moving to high-speed and low-power 400-Gbit/sec interconnects. The optical fiber industry is responding by developing two new IEEE 400-Gbit/sec Ethernet standards, called 400GBase-SR4.2 and 400GBase-SR8, to support the short-reach application space inside the data center. Both use PAM-4 signaling to provide higher transmission speeds over multimode fiber using vertical-cavity surface-emitting lasers (VCSELs), which have historically had power and cost advantages over other media options for these short-reach applications.

The growing demand for speed and capacity is being driven by enterprises migrating to public cloud services offered by hyperscale vendors, or building private clouds that provide owned rather than leased facilities for their users. Photo: iStock

Major providers pave the way

Webscale data centers are expected to continue growing at a rapid pace as applications ranging from social networking and email to retail and finance migrate there. The leading corporations serving this demand, including Google, Amazon, Microsoft, Facebook and Alibaba, are investing in the most advanced technology for optical fiber, switches and transceivers in order to support bandwidth-heavy services such as image and video sharing, collaboration and search.

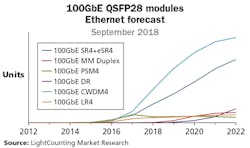

For example, in its September 2018 Ethernet Forecast, the market research and consulting firm LightCounting analyzed the impact of growing data traffic and the changing architecture of data centers on the market forecast for Ethernet optical transceivers, with a focus on the high-speed modules used in data centers. The firm highlights the explosive growth of 100-Gbit Ethernet transmission.

Two module types dominate the 100G space, namely the singlemode CWDM4 module and multimode SR4/eSR4 modules. Industry reports indicate that multimode fiber (MMF) transceivers based on the 100GBase-SR4 standard were deployed in some of the largest webscale data centers built in North America and China, while sales into the largest enterprise data centers have also begun. While CWDM4 transceivers have been widely deployed to support longer-reach (>100 to 150 meters) links, very large numbers of multimode transceivers continue to be deployed in this market space.

This acceptance of 100-Gbit/sec modules portends the need for 400-Gbit/sec modules in the near future, as the industry’s largest influencers map out their strategies to transition to the higher speed. Hyperscale data center providers are making their intentions clear. In a paper presented at OFC in 2017, Google described the deployment of 40GBase-SR4 in its Jupiter architecture (“Data center interconnect and networking: From evolution to holistic revolution,” OFC 2017). In a panel discussion at OFC 2018, Google discussed application of 100GBase-SR4 in its current architecture. As an indication of its strong interest in this technology, an engineer from Google proposed the 400GBase-SR8 objective in the Next-Gen 200G and 400G over MMF Study Group that gave birth to the IEEE P802.3cm Task Force (“400G SR8 for data center interconnect,” January 20, 2018). This was significant, as it marked an increased interest in Ethernet standards by hyperscale data center companies.

Representatives of the largest Chinese technology corporations have also staked their claim in the evolution of 400-Gbit/sec standards. The proposal for the 400GBase-SR4.2 objective in the IEEE P802.3cm Task Force was co-authored by a technology director from Alibaba (“Broad market potential, economic feasibility, and distinct identity for a 400GBase-SR4.2 objective,” January 20, 2018), whose optics roadmap shown at CIOE in Shanghai in October 2017 predicts a reliance on 400 Gbits/sec using SR4.2 (“Challenges of optical interconnects for data centers,” CIOEC 2017). Similarly, in a presentation at Optinet 2018 in Beijing, Baidu announced that its networking roadmap includes both 100GBase-SR4 and 400GBase-SR4.2.

This analysis from LightCounting highlights the explosive growth of 100-Gbit/sec Ethernet transmission.

Multimode remains medium of choice

It has often been assumed that hyperscale data center operations would rely solely on transmission over singlemode optical fiber for its ability to transmit to 500 meters and beyond. But in fact, multimode fiber and VCSEL-based transceivers continue to be deployed, and the latest 400-Gbit/sec IEEE specifications under development call for the use of multimode fiber.

Among optical fiber types, multimode continues to be the most cost-effective option for short-reach data center links up to 100 to 150 m. While the cost of multimode fiber itself is greater than that of singlemode, it is the optics and connection costs that dominate the total cost of a network system, surpassing variations in cable cost. On average, singlemode links continue to cost from 1.5 times to 5 times more than multimode links. As faster optoelectronic technology matures and volumes increase, prices come down for both, and the cost gap between multimode and singlemode is expected to decrease. However, singlemode links are expected to remain more expensive than their equivalent multimode counterparts.

Multimode transceivers also consume less power than their singlemode counterparts, requiring lower drive currents, in the area of 5 to 10 milliamps (mA) compared with 50 to 60 mA for singlemode. This is an important consideration, especially when assessing the cost of powering and cooling an individual switch or an entire data center. In a large data center with thousands of links, a multimode solution can provide substantial cost savings, from both transceiver and power/cooling perspectives.

What’s more, multimode fiber is much easier to install and terminate in the field. The relaxed alignment tolerances (looser by up to a factor of 10) for multimode VCSELs and connectors compared with singlemode are an important consideration for enterprise environments, where frequent moves, adds and changes are required. This advantage extends to cleaning, where a small amount of dust or contamination can create significant attenuation on a singlemode connector, but only slightly increases the loss on a multimode link because of its much larger core size.

These advantages remain when comparing multimode and singlemode for short-reach 400-GbE applications. In addition, gearbox functionality is needed to convert native 50-Gbit/sec PAM-4 to 100-Gbit/sec PAM-4 under the 400GBase-DR4 and -FR4 singlemode standards. There is no such requirement for multimode fiber.

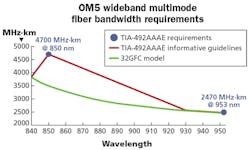

OM5 fiber’s modal bandwidth between 840 and 950 nm enables it to support multiple-wavelength transmission.

Fiber developments keep pace with demand

Multimode fiber continues to evolve to serve growing demands for speed and capacity. There are five types of multimode fiber currently on the market; the designations of all these fiber types begin with the prefix “OM” for “optical multimode.” OM1 and OM2, the original 62.5-micron (µm) and 50-µm diameter types, respectively, are considered obsolete in ISO/IEC 11801-1 and TIA 568.3-D standards, and are no longer included in the main text of these documents. They are, however, allowed as grandfathered fiber types and may be used to extend legacy networks.

OM3 multimode, introduced in 2003, was the first fiber designed for use with VCSEL sources at 850 nanometers (nm), primarily to support 1- and 10-Gbit/sec operation. OM4, standardized in 2009, offers longer link lengths, supporting 10-Gbit/sec operation to 400m in the standard, and up to 550m using some engineering rules.

The latest innovation was the introduction in 2017 of OM5, known as “wideband” multimode fiber. Traditionally, multimode fiber has operated at a single wavelength. When higher network speeds were needed, lasers were developed that would operate at these speeds. This approach worked very well up to 10 Gbits/sec and, later, 25 Gbits/sec. In order to increase speeds further, however, parallel fiber systems were introduced, first for 40 Gbits/sec, then for 100 Gbits/sec. Four fibers, or lanes, were used to support these higher-speed links.

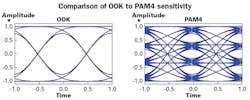

PAM-4 signaling (right) will require better receiver sensitivity than on-off keying signaling (left). Specifically, with PAM-4, the receiver will need to resolve four signal levels, which form three stacked “eyes,” as opposed to the single “eye” in OOK signaling.

OM5 is the first multimode fiber designed to support multiple wavelengths. It enables duplex transmission of 100 Gbits/sec using multiple wavelengths between 850 and 950 nm. This is done while taking advantage of multimode fiber’s longer-wavelength transmission properties. The fiber’s low chromatic dispersion at longer wavelengths means that modal bandwidth requirements could be relaxed at those longer wavelengths.

The first widely deployed multi-wavelength application for OM5 fiber was the two-wavelength 40-Gbit/sec bidirectional (BiDi) transceiver. This was later followed by the four-wavelength short wavelength division multiplexing (SWDM) transceiver. Duplex (BiDi and SWDM) 40-Gbit/sec links are now in wide use. 100-Gbit/sec SWDM4 transceivers shipped in the fourth quarter of 2017, while 100-Gbit/sec BiDi modules became commercially available in January 2018. SWDM4 and BiDi duplex multimode solutions are especially attractive in brownfield installations and also have been adopted in new data center and enterprise systems. Craft familiarity with duplex LC connector-based solutions makes these links popular in the industry.

Recently, a multisource agreement (MSA) for 400-Gbit/sec BiDi was announced. This MSA will continue to build on the advantages of multiwavelength solutions with a four-fiber-pair 400-Gbit/sec link using two wavelengths. It will support link lengths of 70m on OM3 fiber, 100m on OM4 fiber and 150m using OM5 fiber. It is expected that transceivers that comply with this MSA will also be compliant with the 400GBase-SR4.2 IEEE standard when it is adopted.
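The reach figures quoted for the 400-Gbit/sec BiDi MSA lend themselves to a simple planning check. The Python sketch below is illustrative only: the table holds the three distances given above, while the function names (`max_reach_m`, `link_within_reach`, `fibers_supporting`) are hypothetical helpers and not part of any MSA or IEEE document.

```python
# Reach figures quoted in the text for the 400-Gbit/sec BiDi MSA, in meters.
BIDI_400G_REACH_M = {"OM3": 70, "OM4": 100, "OM5": 150}


def max_reach_m(fiber_type):
    """Return the quoted reach for a fiber type, or raise if it is not listed."""
    key = fiber_type.upper()
    if key not in BIDI_400G_REACH_M:
        raise ValueError(f"no 400G BiDi reach listed for {fiber_type!r}")
    return BIDI_400G_REACH_M[key]


def link_within_reach(fiber_type, length_m):
    """True if a link of length_m meters fits within the quoted reach."""
    return length_m <= max_reach_m(fiber_type)


def fibers_supporting(length_m):
    """List the fiber types whose quoted reach covers length_m meters."""
    return [f for f, reach in BIDI_400G_REACH_M.items() if length_m <= reach]


if __name__ == "__main__":
    print(link_within_reach("OM4", 100))  # True
    print(link_within_reach("OM3", 100))  # False
    print(fibers_supporting(120))         # ['OM5']
```

A real link budget would also account for connector loss and the number of mated pairs in the channel, which this sketch ignores.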

This illustration indicates how 400GBase-SR4.2 optics can be operated in 4x100G “shuffle mode.”

PAM-4 signaling is another important innovation in the quest to increase the capacity of an optical fiber link. PAM-4 signaling is a more-complex signaling method than the simple on-off keying (OOK) previously used in optical transmission. Instead of simply transmitting a “1” or “0,” PAM-4 transmission doubles the amount of information that can be sent in a single time period by using four signal levels, so that each symbol carries two bits. PAM-4 signaling will be incorporated into new Ethernet and Fibre Channel standards, as well as MSAs and proprietary solutions, as speeds increase. It will allow for 50-Gbit/sec per lane transmission using today’s 25-Gbaud lasers, and as laser speeds increase further, will allow even higher lane speeds.

There are tradeoffs. PAM-4 signaling will require better receiver sensitivity than OOK in order to detect the different levels. As seen in the comparison illustration, the receiver must distinguish four levels, which form three smaller stacked eyes, rather than the single eye found in OOK. The sensitivity requirements can be reduced using several compensation methods, including equalization and/or forward error correction.
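The arithmetic behind PAM-4 can be sketched in a few lines of Python. This is a minimal illustration, not a description of any transceiver’s actual encoder: the Gray-coded bit-to-level table is an assumption chosen for the example, the 25-Gbaud figure is the nominal rate quoted above, and all function names are hypothetical.

```python
# Illustrative Gray-coded mapping from bit pairs to four amplitude levels (0..3).
# The specific ordering is an assumption made for this sketch.
GRAY_PAM4 = {(0, 0): 0, (0, 1): 1, (1, 1): 2, (1, 0): 3}


def pam4_encode(bits):
    """Pack a bit sequence into PAM-4 symbols, two bits per symbol."""
    if len(bits) % 2:
        raise ValueError("PAM-4 needs an even number of bits")
    return [GRAY_PAM4[(bits[i], bits[i + 1])] for i in range(0, len(bits), 2)]


def lane_rate_gbps(symbol_rate_gbaud, bits_per_symbol):
    """Lane bit rate = symbol rate x bits carried by each symbol."""
    return symbol_rate_gbaud * bits_per_symbol


def level_spacing(levels):
    """Gap between adjacent, equally spaced levels as a fraction of full swing."""
    return 1 / (levels - 1)


if __name__ == "__main__":
    print(pam4_encode([0, 0, 0, 1, 1, 1, 1, 0]))  # [0, 1, 2, 3]
    print(lane_rate_gbps(25, 1))                  # OOK:   25
    print(lane_rate_gbps(25, 2))                  # PAM-4: 50
    print(level_spacing(2), level_spacing(4))     # OOK uses the full swing, PAM-4 a third
```

The last line hints at the tradeoff: for the same overall signal swing, adjacent PAM-4 levels sit only one-third as far apart as the two OOK levels, which is why the receiver needs better sensitivity or help from equalization and forward error correction.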

IEEE P802.3cm standard for 400G over multimode

The latest IEEE task force to be formed is IEEE P802.3cm, which grew out of the Next-Gen 200G and 400G over MMF Study Group. The committee has adopted two main objectives:

- 400 Gbits/sec over four multimode fiber pairs up to a distance of at least 100m

- 400 Gbits/sec over eight multimode fiber pairs up to at least 100m

IEEE P802.3cm will develop two new 400-Gbit/sec multimode physical medium dependent (PMD) sublayers to support these objectives.

The first PMD, 400GBase-SR8, will use eight pairs of multimode fiber, each pair carrying 50 Gbits/sec. This was based on a request from a hyperscale engineer who wanted the flexibility this solution offered, including breakout of 50-, 100-, and 200-Gbit/sec links, as well as 400-Gbit/sec switch-to-switch links. There will be two different media interfaces for 400GBase-SR8: a new 16-fiber multifiber push-on (MPO) connector that was recently standardized in TIA, and the older 24-fiber MPO connector that has two rows of 12 fibers.

The second PMD is 400GBase-SR4.2. This will be the first standards-based application to take advantage of the wideband capabilities of OM5 fiber. The task force has established several important parameters, including use of a second wavelength (910 nm) and BiDi transmission. 400GBase-SR4.2 will be the rough equivalent of packaging four 100-GbE BiDi modules into a QSFP-DD (double density) design. As stated earlier, it is expected that 400G-BiDi MSA modules will be compliant with 400GBase-SR4.2 upon completion of the standard.

400GBase-SR4.2 optics offer a low-cost 100/150-meter point-to-point link but can be operated in 4x100-Gbit/sec breakout and shuffle mode as well. The 4x4 fiber shuffle allows the user to replace four 100-Gbit/sec ports with a single 400-Gbit/sec port on the switch, providing much higher bandwidth density on the switch faceplate and reducing the number of cabling assemblies required to support the switch.
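The lane arithmetic behind the two PMDs can be checked with a short Python sketch. It is a back-of-envelope model only: the `MultimodePmd` class and its fields are hypothetical, and the 50-Gbit/sec-per-wavelength figure for SR4.2 is inferred here from the description of four 100-Gbit/sec BiDi pairs using two wavelengths rather than quoted from the draft standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MultimodePmd:
    """Toy model of a parallel multimode PMD (names are illustrative)."""
    name: str
    fiber_pairs: int
    wavelengths_per_fiber: int
    gbps_per_wavelength: int

    @property
    def gbps_per_pair(self):
        return self.wavelengths_per_fiber * self.gbps_per_wavelength

    @property
    def total_gbps(self):
        return self.fiber_pairs * self.gbps_per_pair

    def breakout_options(self):
        """Map link count -> per-link rate for equal splits on whole fiber pairs.

        This enumerates what the arithmetic allows, not which modes
        the standard will actually define.
        """
        return {
            n: self.total_gbps // n
            for n in range(1, self.fiber_pairs + 1)
            if self.fiber_pairs % n == 0
        }


SR8 = MultimodePmd("400GBase-SR8", fiber_pairs=8,
                   wavelengths_per_fiber=1, gbps_per_wavelength=50)
SR4_2 = MultimodePmd("400GBase-SR4.2", fiber_pairs=4,
                     wavelengths_per_fiber=2, gbps_per_wavelength=50)

if __name__ == "__main__":
    for pmd in (SR8, SR4_2):
        print(pmd.name, pmd.total_gbps, pmd.breakout_options())
```

Both models total 400 Gbits/sec. The SR8 splits come out as 1x400, 2x200, 4x100 and 8x50, matching the breakout rates mentioned for that PMD, while SR4.2 bottoms out at 4x100 because each fiber pair already carries 100 Gbits/sec.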

The committee’s work is proceeding quickly. Draft 1.2 has been released for comment; this includes technical details required for building prototype devices. It is expected that prototype parts will be available in early 2019. The finalized standard can be expected by the close of 2019.

400GBase-SR8 optics offer maximum flexibility for shuffle applications. They also offer eight-way breakout capability to 50-Gbit/sec servers in a top-of-rack (ToR) configuration, and may be implemented over a few meters in active optical cable (AOC).

As server speeds continue to increase, ToR switches could give way to middle-of-row (MoR) or end-of-row (EoR) switches as server counts in racks decrease, due to increasing power dissipation per server. In this case, cost-effective VCSEL optics over 20 to 30 meters of multimode fiber will replace copper direct attach cable (DAC) to 100-Gbit/sec servers.

VCSEL-based links over multimode fiber will evolve to 100-Gbit/sec VCSELs sometime in 2021, initially to support short-reach server interconnects up to 30m. That same year should see the first application of 100-Gbit/sec VCSELs in 800GBase-SR8, either in point-to-point links or in 8x100-Gbit/sec breakout mode to connect an 800-Gbit/sec port on a MoR switch to 8x100-Gbit/sec server ports over 100m.

In summary

There is growing interest in 100- and 400-Gbit/sec VCSEL-based multimode fiber links up to 100 to 150m for both webscale and large enterprise data centers. To ensure the viability of optical solutions for these demanding applications, the IEEE P802.3cm Task Force is writing standards for 400 Gbits/sec over eight-fiber-pair cable (SR8) and four-fiber-pair cable (SR4.2).

These solutions will take advantage of the historical cost and power benefits of multimode fiber and VCSEL transceivers, advantages that persist as the market moves to PAM-4-based modulation. Technical development will continue, and it is anticipated that 100-Gbit/sec VCSELs will support 30m connections from servers to MoR/EoR switches, and 100m switch-to-switch links using multimode fiber cable, in 2021.

John Kamino is senior manager of multimode optical fiber product management for OFS (www.ofsoptics.com). He has published numerous articles in technical publications and has presented at many technical conferences. Kamino participates in TIA and IEEE standards-development activities.